Older versions of Wheel files can be obtained from the Previous version download script (GoogleDrive).

Prebuilt binaries with Tensorflow Lite enabled, for RaspberryPi. Since the 64-bit OS for RaspberryPi has been officially released, I have stopped building armhf Wheels. If you need a Wheel for armhf, please use TensorflowLite-bin instead.

- Support for Flex Delegate.
- Support for XNNPACK.
- Support for XNNPACK "Half-precision Inference Doubles On-Device Inference Performance".

Python API packages

| Device | OS | Distribution | Architecture | Python ver | Note |
|---|---|---|---|---|---|
| RaspberryPi3/4 | Raspbian/Debian | Stretch | armhf / armv7l | 3.5.3 | 32bit, glibc2.24 |
| RaspberryPi3/4 | Raspbian/Debian | Buster | armhf / armv7l | 3.7.3 / 2.7.16 | 32bit, glibc2.28 |
| RaspberryPi3/4 | RaspberryPiOS/Debian | Buster | aarch64 / armv8 | 3.7.3 | 64bit, glibc2.28 |
| RaspberryPi3/4 | Ubuntu 18.04 | Bionic | aarch64 / armv8 | 3.6.9 | 64bit, glibc2.27 |
| RaspberryPi3/4 | Ubuntu 20.04 | Focal | aarch64 / armv8 | 3.8.2 | 64bit, glibc2.31 |
| RaspberryPi3/4,PiZero | Ubuntu 21.04/Debian/RaspberryPiOS | Hirsute/Bullseye | aarch64 / armv8 | 3.9.x | 64bit, glibc2.33/glibc2.31 |
| RaspberryPi3/4 | Ubuntu 22.04 | Jammy | aarch64 / armv8 | 3.10.x | 64bit, glibc2.35 |
| RaspberryPi4/5,PiZero | Debian/RaspberryPiOS | Bookworm | aarch64 / armv8 | 3.11.x | 64bit, glibc2.36 |
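To pick the matching row in the table above, it can help to print the architecture, Python version, and C library version of the running system. The following is a minimal sketch using only the Python standard library. The function name `describe_environment` is hypothetical, and `platform.libc_ver()` may return empty strings on systems that do not use glibc.

```python
import platform
import sys


def describe_environment():
    """Collect the fields used in the table: architecture, Python version, libc."""
    libc_name, libc_version = platform.libc_ver()
    return {
        # Typically "aarch64" on a 64-bit OS and "armv7l" on a 32-bit OS.
        "architecture": platform.machine(),
        "python": "{}.{}.{}".format(*sys.version_info[:3]),
        # Name and version are concatenated to match the table's Note column.
        "libc": "{}{}".format(libc_name, libc_version),
    }


if __name__ == "__main__":
    for key, value in describe_environment().items():
        print("{}: {}".format(key, value))
```

Compare the printed architecture, Python version, and libc against the Architecture, Python ver, and Note columns.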

Minimal configuration stand-alone installer for Tensorflow Lite. https://github.com/PINTO0309/TensorflowLite-bin.git

Python 2.x / 3.x + Tensorflow v1.15.0

| .whl | 4Threads | Note |
|---|---|---|
| tensorflow-1.15.0-cp35-cp35m-linux_armv7l.whl | ○ | Raspbian/Debian Stretch, glibc 2.24 |
| tensorflow-1.15.0-cp27-cp27mu-linux_armv7l.whl | ○ | Raspbian/Debian Buster, glibc 2.28 |
| tensorflow-1.15.0-cp37-cp37m-linux_armv7l.whl | ○ | Raspbian/Debian Buster, glibc 2.28 |
| tensorflow-1.15.0-cp37-cp37m-linux_aarch64.whl | ○ | Debian Buster, glibc 2.28 |

Python 3.x + Tensorflow v2

*FD = FlexDelegate, **XP = XNNPACK Float16 boost, ***MP = MediaPipe CustomOP, ****NP = Numpy

| .whl | FD | XP | MP | NP | Note |
|---|---|---|---|---|---|
| tensorflow-2.15.0.post1-cp39-none-linux_aarch64.whl | ○ | | | 1.26 | Ubuntu 21.04 glibc 2.33, Debian Bullseye glibc 2.31 |
| tensorflow-2.15.0.post1-cp310-none-linux_aarch64.whl | ○ | | | 1.26 | Ubuntu 22.04 glibc 2.35 |
| tensorflow-2.15.0.post1-cp311-none-linux_aarch64.whl | ○ | | | 1.26 | Debian Bookworm glibc 2.36 |
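The wheel names in the table follow the pattern `tensorflow-<version>-cp<python tag>-none-linux_<arch>.whl`. As a sketch, the file name and release URL for the running interpreter could be derived as follows. The URL layout is copied from the install example in this README; the helper names `wheel_name` and `wheel_url` are hypothetical, and nothing here checks that a given wheel actually exists in the releases.

```python
import platform
import sys

# Release URL prefix as used by the pip install example in this README.
RELEASE_BASE = "https://github.com/PINTO0309/Tensorflow-bin/releases/download"


def wheel_name(tf_version, py_tag=None, arch=None):
    """Build the wheel file name; defaults come from the running interpreter."""
    if py_tag is None:
        py_tag = "{}{}".format(sys.version_info.major, sys.version_info.minor)
    if arch is None:
        arch = platform.machine()
    return "tensorflow-{}-cp{}-none-linux_{}.whl".format(tf_version, py_tag, arch)


def wheel_url(tf_version, py_tag=None, arch=None):
    """Build the download URL for the wheel of the given Tensorflow version."""
    return "{}/v{}/{}".format(
        RELEASE_BASE, tf_version, wheel_name(tf_version, py_tag, arch))


if __name__ == "__main__":
    print(wheel_url("2.15.0.post1"))
```

Passing `py_tag` and `arch` explicitly makes it possible to compute the URL on a different machine than the target device.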

【Appendix】 C Library + Tensorflow v1.x.x / v2.x.x

I have not verified the behavior of these libraries because I do not have C implementation experience. See the official tutorial on Tensorflow C binding generation.

Appx1. C-API build procedure

Native build procedure of Tensorflow v2.0.0 C API for RaspberryPi / arm64 devices (armhf / aarch64)

Appx2. C-API Usage

$ wget https://raw.githubusercontent.com/PINTO0309/Tensorflow-bin/main/C-library/2.2.0-armhf/install-buster.sh
$ chmod +x install-buster.sh
$ ./install-buster.sh

| Version | Binary | Note |
|---|---|---|
| v1.15.0 | C-library/1.15.0-armhf/install-buster.sh | Raspbian/Debian Buster, glibc 2.28 |
| v1.15.0 | C-library/1.15.0-aarch64/install-buster.sh | Raspbian/Debian Buster, glibc 2.28 |
| v2.2.0 | C-library/2.2.0-armhf/install-buster.sh | Raspbian/Debian Buster, glibc 2.28 |
| v2.3.0 | C-library/2.3.0-aarch64/install-buster.sh | RaspberryPiOS/Raspbian/Debian Buster, glibc 2.28 |
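One way to check whether the C library was installed is to load it and call `TF_Version()`, which is part of the Tensorflow C API. The sketch below does this from Python with `ctypes`. It assumes the install script places `libtensorflow.so` somewhere the dynamic linker can find; that is an assumption, not something this README confirms. The function name `tensorflow_c_version` is hypothetical.

```python
import ctypes
import ctypes.util


def tensorflow_c_version(library_name="tensorflow"):
    """Return the TF_Version() string, or None if the library is unavailable.

    Assumes the shared library is discoverable as lib<library_name>.so.
    """
    path = ctypes.util.find_library(library_name)
    if path is None:
        return None
    try:
        lib = ctypes.CDLL(path)
        # TF_Version returns a NUL-terminated C string owned by the library.
        lib.TF_Version.restype = ctypes.c_char_p
        return lib.TF_Version().decode("utf-8")
    except (OSError, AttributeError):
        # Library failed to load, or it does not export TF_Version.
        return None


if __name__ == "__main__":
    version = tensorflow_c_version()
    print("libtensorflow not found" if version is None else version)
```

A `None` result only means the library was not found on the default search path; it may still be installed in a directory that needs to be added to the linker configuration.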

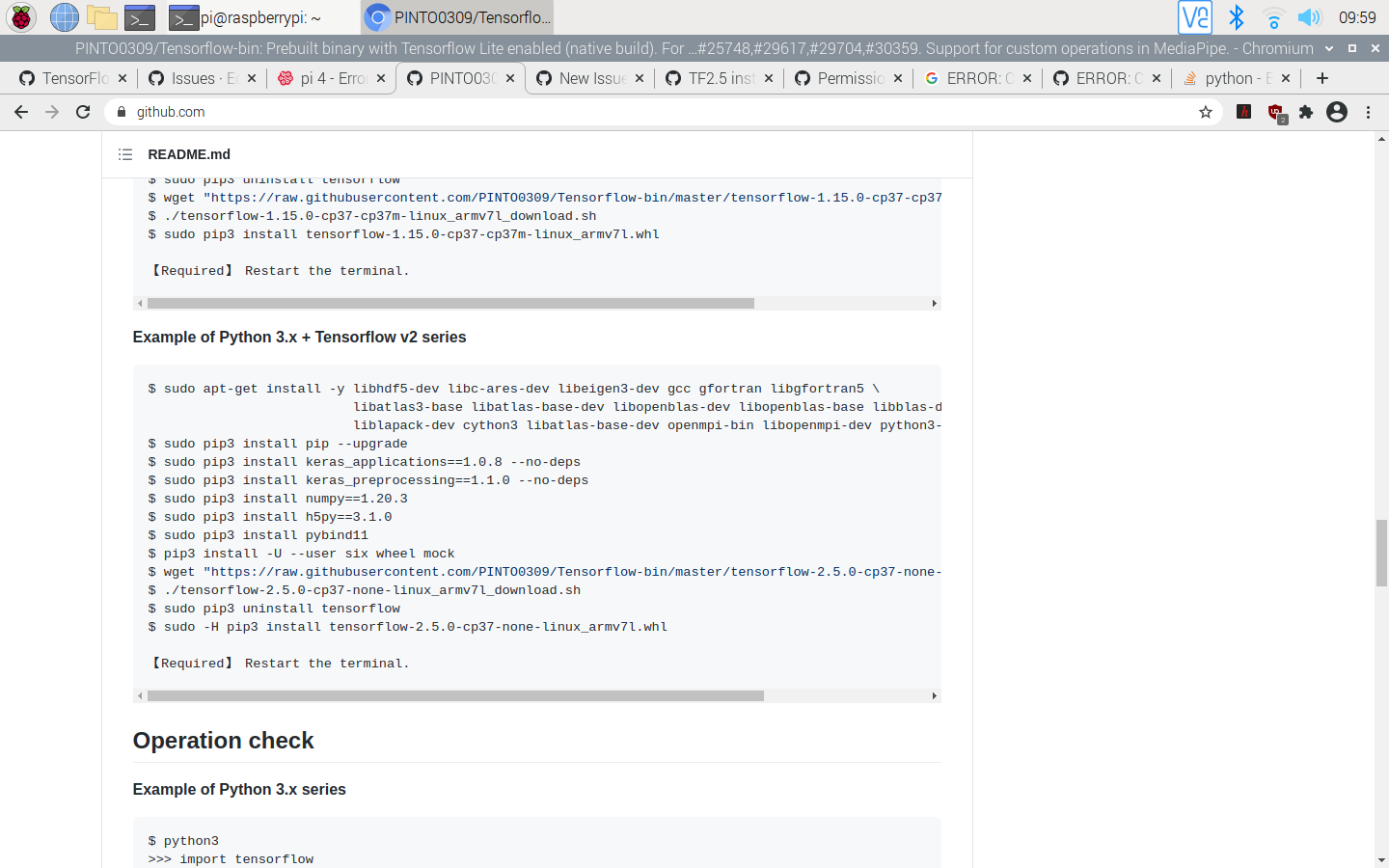

Example of Python 3.x + Tensorflow v1 series

$ sudo apt-get install -y \
libhdf5-dev libc-ares-dev libeigen3-dev gcc gfortran \
libgfortran5 libatlas3-base libatlas-base-dev \
libopenblas-dev libopenblas-base libblas-dev \
liblapack-dev cython3 openmpi-bin libopenmpi-dev \
python3-dev
$ sudo pip3 install pip --upgrade
$ sudo pip3 install keras_applications==1.0.8 --no-deps
$ sudo pip3 install keras_preprocessing==1.1.0 --no-deps
$ sudo pip3 install h5py==2.9.0
$ sudo pip3 install pybind11
$ pip3 install -U --user six wheel mock
$ sudo pip3 uninstall tensorflow
$ wget "https://raw.githubusercontent.com/PINTO0309/Tensorflow-bin/master/previous_versions/download_tensorflow-1.15.0-cp37-cp37m-linux_armv7l.sh"
$ chmod +x download_tensorflow-1.15.0-cp37-cp37m-linux_armv7l.sh
$ ./download_tensorflow-1.15.0-cp37-cp37m-linux_armv7l.sh
$ sudo pip3 install tensorflow-1.15.0-cp37-cp37m-linux_armv7l.whl

Example of Python 3.x + Tensorflow v2 series

##### Bullseye, Ubuntu22.04

sudo apt update && sudo apt upgrade -y && \
sudo apt install -y \
libhdf5-dev \
unzip \
pkg-config \
python3-pip \
cmake \
make \
git \
python-is-python3 \
wget \
patchelf && \
pip install -U pip && \
pip install numpy==1.26.2 && \
pip install keras_applications==1.0.8 --no-deps && \
pip install keras_preprocessing==1.1.2 --no-deps && \
pip install h5py==3.6.0 && \
pip install pybind11==2.9.2 && \
pip install packaging && \
pip install protobuf==3.20.3 && \
pip install six wheel mock gdown

##### Bookworm

sudo apt update && sudo apt upgrade -y && \
sudo apt install -y \
libhdf5-dev \
unzip \
pkg-config \
python3-pip \
cmake \
make \
git \
python-is-python3 \
wget \
patchelf && \
pip install -U pip --break-system-packages && \
pip install numpy==1.26.2 --break-system-packages && \
pip install keras_applications==1.0.8 --no-deps --break-system-packages && \
pip install keras_preprocessing==1.1.2 --no-deps --break-system-packages && \
pip install h5py==3.10.0 --break-system-packages && \
pip install pybind11==2.9.2 --break-system-packages && \
pip install packaging --break-system-packages && \
pip install protobuf==3.20.3 --break-system-packages && \
pip install six wheel mock gdown --break-system-packages

pip uninstall tensorflow

TFVER=2.15.0.post1

# Set PYVER to match your Python version and keep only that one line.
PYVER=39
# or
PYVER=310
# or
PYVER=311

ARCH=`python -c 'import platform; print(platform.machine())'`
echo CPU ARCH: ${ARCH}

pip install \
--no-cache-dir \
https://github.com/PINTO0309/Tensorflow-bin/releases/download/v${TFVER}/tensorflow-${TFVER}-cp${PYVER}-none-linux_${ARCH}.whl

Example of Python 3.x series

$ python -c 'import tensorflow as tf;print(tf.__version__)'

2.15.0.post1

Sample of MultiThread x4

- Preparation of test environment

$ cd ~;mkdir test

$ curl https://raw.githubusercontent.com/tensorflow/tensorflow/master/tensorflow/lite/examples/label_image/testdata/grace_hopper.bmp > ~/test/grace_hopper.bmp

$ curl https://storage.googleapis.com/download.tensorflow.org/models/mobilenet_v1_1.0_224_frozen.tgz | tar xzv -C ~/test mobilenet_v1_1.0_224/labels.txt

$ mv ~/test/mobilenet_v1_1.0_224/labels.txt ~/test/

$ curl http://download.tensorflow.org/models/mobilenet_v1_2018_02_22/mobilenet_v1_1.0_224_quant.tgz | tar xzv -C ~/test

$ cp tensorflow/tensorflow/contrib/lite/examples/python/label_image.py ~/test

[Sample Code] label_image.py

import argparse
import time

import numpy as np
from PIL import Image

# Tensorflow -v1.12.0
#from tensorflow.contrib.lite.python import interpreter as interpreter_wrapper
# Tensorflow v1.13.0+, v2.x.x
from tensorflow.lite.python import interpreter as interpreter_wrapper


def load_labels(filename):
    # Read one label per line and close the file when done.
    with open(filename, 'r') as input_file:
        return [line.strip() for line in input_file]


if __name__ == "__main__":
    floating_model = False

    parser = argparse.ArgumentParser()
    parser.add_argument("-i", "--image", default="/tmp/grace_hopper.bmp",
                        help="image to be classified")
    parser.add_argument("-m", "--model_file",
                        default="/tmp/mobilenet_v1_1.0_224_quant.tflite",
                        help=".tflite model to be executed")
    parser.add_argument("-l", "--label_file", default="/tmp/labels.txt",
                        help="name of file containing labels")
    # type=float / type=int so that values given on the command line are numeric
    parser.add_argument("--input_mean", type=float, default=127.5, help="input_mean")
    parser.add_argument("--input_std", type=float, default=127.5,
                        help="input standard deviation")
    parser.add_argument("--num_threads", type=int, default=1, help="number of threads")
    args = parser.parse_args()

    ### Tensorflow -v2.2.0
    #interpreter = interpreter_wrapper.Interpreter(model_path=args.model_file)
    ### Tensorflow v2.3.0+
    interpreter = interpreter_wrapper.Interpreter(model_path=args.model_file, num_threads=args.num_threads)

    interpreter.allocate_tensors()
    input_details = interpreter.get_input_details()
    output_details = interpreter.get_output_details()

    # check the type of the input tensor
    if input_details[0]['dtype'] == np.float32:
        floating_model = True

    # NxHxWxC, H:1, W:2
    height = input_details[0]['shape'][1]
    width = input_details[0]['shape'][2]
    img = Image.open(args.image)
    img = img.resize((width, height))

    # add N dim
    input_data = np.expand_dims(img, axis=0)
    if floating_model:
        input_data = (np.float32(input_data) - args.input_mean) / args.input_std

    ### Tensorflow -v2.2.0
    #interpreter.set_num_threads(int(args.num_threads))
    interpreter.set_tensor(input_details[0]['index'], input_data)

    # measure only the inference call
    start_time = time.time()
    interpreter.invoke()
    stop_time = time.time()

    output_data = interpreter.get_tensor(output_details[0]['index'])
    results = np.squeeze(output_data)
    top_k = results.argsort()[-5:][::-1]
    labels = load_labels(args.label_file)
    for i in top_k:
        if floating_model:
            print('{0:08.6f}'.format(float(results[i]))+":", labels[i])
        else:
            # quantized model: scale uint8 scores to the 0..1 range
            print('{0:08.6f}'.format(float(results[i]/255.0))+":", labels[i])

    print("time: ", stop_time - start_time)

- Run test

$ cd ~/test
$ python3 label_image.py \
--num_threads 1 \
--image grace_hopper.bmp \
--model_file mobilenet_v1_1.0_224_quant.tflite \
--label_file labels.txt

0.415686: 653:military uniform
0.352941: 907:Windsor tie
0.058824: 668:mortarboard
0.035294: 458:bow tie, bow-tie, bowtie
0.035294: 835:suit, suit of clothes
time: 0.4152982234954834

$ cd ~/test
$ python3 label_image.py \
--num_threads 4 \
--image grace_hopper.bmp \
--model_file mobilenet_v1_1.0_224_quant.tflite \
--label_file labels.txt

0.415686: 653:military uniform
0.352941: 907:Windsor tie
0.058824: 668:mortarboard
0.035294: 458:bow tie, bow-tie, bowtie
0.035294: 835:suit, suit of clothes
time: 0.1647195816040039

Sample of MultiThread x4 - Real-time inference with a USB camera

- RaspberryPi4 (CPU only)

- Ubuntu 19.10 aarch64

- High resolution IPS 1080p display

- USB camera resolution 640x480

- Tensorflow Lite

- MobileNetV2-SSDLite (Pascal-VOC, Integer Quantization)

Tensorflow v1.11.0

============================================================

Tensorflow v1.11.0

============================================================

Python2.x - Bazel 0.17.2

$ sudo apt-get install -y openmpi-bin libopenmpi-dev libhdf5-dev

$ cd ~

$ git clone https://github.com/tensorflow/tensorflow.git

$ cd tensorflow

$ git checkout v1.11.0

$ ./configure

Please specify the location of python. [Default is /usr/bin/python]:

Found possible Python library paths:

/usr/local/lib/python2.7/dist-packages

/usr/local/lib

/home/pi/tensorflow/tensorflow/contrib/lite/tools/make/gen/rpi_armv7l/lib

/usr/lib/python2.7/dist-packages

/opt/movidius/caffe/python

Please input the desired Python library path to use. Default is [/usr/local/lib/python2.7/dist-packages]

Do you wish to build TensorFlow with jemalloc as malloc support? [Y/n]: n

No jemalloc as malloc support will be enabled for TensorFlow.

Do you wish to build TensorFlow with Google Cloud Platform support? [Y/n]: n

No Google Cloud Platform support will be enabled for TensorFlow.

Do you wish to build TensorFlow with Hadoop File System support? [Y/n]: n

No Hadoop File System support will be enabled for TensorFlow.

Do you wish to build TensorFlow with Amazon AWS Platform support? [Y/n]: n

No Amazon AWS Platform support will be enabled for TensorFlow.

Do you wish to build TensorFlow with Apache Kafka Platform support? [Y/n]: n

No Apache Kafka Platform support will be enabled for TensorFlow.

Do you wish to build TensorFlow with XLA JIT support? [y/N]: n

No XLA JIT support will be enabled for TensorFlow.

Do you wish to build TensorFlow with GDR support? [y/N]: n

No GDR support will be enabled for TensorFlow.

Do you wish to build TensorFlow with VERBS support? [y/N]: n

No VERBS support will be enabled for TensorFlow.

Do you wish to build TensorFlow with nGraph support? [y/N]: n

No nGraph support will be enabled for TensorFlow.

Do you wish to build TensorFlow with OpenCL SYCL support? [y/N]: n

No OpenCL SYCL support will be enabled for TensorFlow.

Do you wish to build TensorFlow with CUDA support? [y/N]: n

No CUDA support will be enabled for TensorFlow.

Do you wish to download a fresh release of clang? (Experimental) [y/N]: n

Clang will not be downloaded.

Do you wish to build TensorFlow with MPI support? [y/N]: n

No MPI support will be enabled for TensorFlow.

Please specify optimization flags to use during compilation when bazel option "--config=opt" is specified [Default is -march=native]:

Would you like to interactively configure ./WORKSPACE for Android builds? [y/N]: n

$ sudo bazel build --config opt --local_resources 1024.0,0.5,0.5 \
--copt=-mfpu=neon-vfpv4 \
--copt=-ftree-vectorize \
--copt=-funsafe-math-optimizations \
--copt=-ftree-loop-vectorize \
--copt=-fomit-frame-pointer \
--copt=-DRASPBERRY_PI \
--host_copt=-DRASPBERRY_PI \
//tensorflow/tools/pip_package:build_pip_package

$ sudo ./bazel-bin/tensorflow/tools/pip_package/build_pip_package /tmp/tensorflow_pkg
$ sudo pip2 install /tmp/tensorflow_pkg/tensorflow-1.11.0-cp27-cp27mu-linux_armv7l.whl

Python3.x - Bazel 0.17.2 + ZRAM + PythonAPI(MultiThread)

Feb 23, 2019, Compilation work completed

$ sudo nano /etc/dphys-swapfile

CONF_SWAPSIZE=2048

CONF_MAXSWAP=2048

$ sudo systemctl stop dphys-swapfile

$ sudo systemctl start dphys-swapfile

$ wget https://github.com/PINTO0309/Tensorflow-bin/raw/master/zram.sh

$ chmod 755 zram.sh

$ sudo mv zram.sh /etc/init.d/

$ sudo update-rc.d zram.sh defaults

$ sudo reboot
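After the reboot, one way to confirm that the enlarged swap is active is to read `SwapTotal` from `/proc/meminfo`. The sketch below parses that file with the standard library only. The helper names are hypothetical. The reported total covers every active swap area, so it would include zram devices if `zram.sh` registers them as swap (an assumption about that script).

```python
def parse_meminfo(text):
    """Parse /proc/meminfo-style text into a dict of integer values.

    Most entries are reported in kB; a few (such as HugePages_Total) are counts.
    """
    values = {}
    for line in text.splitlines():
        key, sep, rest = line.partition(":")
        if not sep:
            continue
        fields = rest.split()
        if fields and fields[0].isdigit():
            values[key.strip()] = int(fields[0])
    return values


def swap_total_mib(meminfo_path="/proc/meminfo"):
    """Return the total configured swap in MiB (0 if the entry is missing)."""
    with open(meminfo_path) as f:
        info = parse_meminfo(f.read())
    return info.get("SwapTotal", 0) / 1024.0


if __name__ == "__main__":
    print("SwapTotal: {:.0f} MiB".format(swap_total_mib()))
```

With the 2048 MB setting above, the printed value should be at least roughly 2048 MiB before starting the build.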

$ sudo apt-get install -y libhdf5-dev libc-ares-dev libeigen3-dev

$ sudo pip3 install keras_applications==1.0.7 --no-deps

$ sudo pip3 install keras_preprocessing==1.0.9 --no-deps

$ sudo pip3 install h5py==2.9.0

$ sudo apt-get install -y openmpi-bin libopenmpi-dev

$ cd ~

$ git clone https://github.com/tensorflow/tensorflow.git

$ cd tensorflow

$ git checkout v1.11.0

Modify the program with reference to the following.

tensorflow/contrib/lite/examples/python/label_image.py

import argparse
import time

import numpy as np
from PIL import Image

from tensorflow.contrib.lite.python import interpreter as interpreter_wrapper


def load_labels(filename):
    # Read one label per line and close the file when done.
    with open(filename, 'r') as input_file:
        return [line.strip() for line in input_file]


if __name__ == "__main__":
    floating_model = False

    parser = argparse.ArgumentParser()
    parser.add_argument("-i", "--image", default="/tmp/grace_hopper.bmp",
                        help="image to be classified")
    parser.add_argument("-m", "--model_file",
                        default="/tmp/mobilenet_v1_1.0_224_quant.tflite",
                        help=".tflite model to be executed")
    parser.add_argument("-l", "--label_file", default="/tmp/labels.txt",
                        help="name of file containing labels")
    # type=float / type=int so that values given on the command line are numeric
    parser.add_argument("--input_mean", type=float, default=127.5, help="input_mean")
    parser.add_argument("--input_std", type=float, default=127.5,
                        help="input standard deviation")
    parser.add_argument("--num_threads", type=int, default=1, help="number of threads")
    args = parser.parse_args()

    interpreter = interpreter_wrapper.Interpreter(model_path=args.model_file)
    interpreter.allocate_tensors()
    input_details = interpreter.get_input_details()
    output_details = interpreter.get_output_details()

    # check the type of the input tensor
    if input_details[0]['dtype'] == np.float32:
        floating_model = True

    # NxHxWxC, H:1, W:2
    height = input_details[0]['shape'][1]
    width = input_details[0]['shape'][2]
    img = Image.open(args.image)
    img = img.resize((width, height))

    # add N dim
    input_data = np.expand_dims(img, axis=0)
    if floating_model:
        input_data = (np.float32(input_data) - args.input_mean) / args.input_std

    # set_num_threads is the method added to interpreter.py in this procedure
    interpreter.set_num_threads(args.num_threads)
    interpreter.set_tensor(input_details[0]['index'], input_data)

    # measure only the inference call
    start_time = time.time()
    interpreter.invoke()
    stop_time = time.time()

    output_data = interpreter.get_tensor(output_details[0]['index'])
    results = np.squeeze(output_data)
    top_k = results.argsort()[-5:][::-1]
    labels = load_labels(args.label_file)
    for i in top_k:
        if floating_model:
            print('{0:08.6f}'.format(float(results[i]))+":", labels[i])
        else:
            # quantized model: scale uint8 scores to the 0..1 range
            print('{0:08.6f}'.format(float(results[i]/255.0))+":", labels[i])

    print("time: ", stop_time - start_time)

tensorflow/contrib/lite/python/interpreter.py

# Add the following two lines at the end of the file, as a method of the Interpreter class
  def set_num_threads(self, i):
    return self._interpreter.SetNumThreads(i)

tensorflow/contrib/lite/python/interpreter_wrapper/interpreter_wrapper.cc

// Change the code near the end of the file as follows
PyObject* InterpreterWrapper::ResetVariableTensors() {
  TFLITE_PY_ENSURE_VALID_INTERPRETER();
  TFLITE_PY_CHECK(interpreter_->ResetVariableTensors());
  Py_RETURN_NONE;
}

PyObject* InterpreterWrapper::SetNumThreads(int i) {
  interpreter_->SetNumThreads(i);
  Py_RETURN_NONE;
}

}  // namespace interpreter_wrapper
}  // namespace tflite

tensorflow/contrib/lite/python/interpreter_wrapper/interpreter_wrapper.h

// Change the middle of the class declaration as follows
  // should be the interpreter object providing the memory.
  PyObject* tensor(PyObject* base_object, int i);

  PyObject* SetNumThreads(int i);

 private:
  // Helper function to construct an `InterpreterWrapper` object.
  // It only returns InterpreterWrapper if it can construct an `Interpreter`.

$ ./configure

Please specify the location of python. [Default is /usr/bin/python]: /usr/bin/python3

Found possible Python library paths:

/usr/local/lib

/usr/lib/python3/dist-packages

/usr/local/lib/python3.5/dist-packages

/opt/movidius/caffe/python

Please input the desired Python library path to use. Default is [/usr/local/lib] /usr/local/lib/python3.5/dist-packages

Do you wish to build TensorFlow with jemalloc as malloc support? [Y/n]: n

No jemalloc as malloc support will be enabled for TensorFlow.

Do you wish to build TensorFlow with Google Cloud Platform support? [Y/n]: n

No Google Cloud Platform support will be enabled for TensorFlow.

Do you wish to build TensorFlow with Hadoop File System support? [Y/n]: n

No Hadoop File System support will be enabled for TensorFlow.

Do you wish to build TensorFlow with Amazon AWS Platform support? [Y/n]: n

No Amazon AWS Platform support will be enabled for TensorFlow.

Do you wish to build TensorFlow with Apache Kafka Platform support? [Y/n]: n

No Apache Kafka Platform support will be enabled for TensorFlow.

Do you wish to build TensorFlow with XLA JIT support? [y/N]: n

No XLA JIT support will be enabled for TensorFlow.

Do you wish to build TensorFlow with GDR support? [y/N]: n

No GDR support will be enabled for TensorFlow.

Do you wish to build TensorFlow with VERBS support? [y/N]: n

No VERBS support will be enabled for TensorFlow.

Do you wish to build TensorFlow with nGraph support? [y/N]: n

No nGraph support will be enabled for TensorFlow.

Do you wish to build TensorFlow with OpenCL SYCL support? [y/N]: n

No OpenCL SYCL support will be enabled for TensorFlow.

Do you wish to build TensorFlow with CUDA support? [y/N]: n

No CUDA support will be enabled for TensorFlow.

Do you wish to download a fresh release of clang? (Experimental) [y/N]: n

Clang will not be downloaded.

Do you wish to build TensorFlow with MPI support? [y/N]: n

No MPI support will be enabled for TensorFlow.

Please specify optimization flags to use during compilation when bazel option "--config=opt" is specified [Default is -march=native]:

Would you like to interactively configure ./WORKSPACE for Android builds? [y/N]: n

Not configuring the WORKSPACE for Android builds.

Preconfigured Bazel build configs. You can use any of the below by adding "--config=<>" to your build command. See tools/bazel.rc for more details.

--config=mkl # Build with MKL support.

--config=monolithic # Config for mostly static monolithic build.

Configuration finished

$ sudo bazel build --config opt --local_resources 1024.0,0.5,0.5 \
--copt=-mfpu=neon-vfpv4 \
--copt=-ftree-vectorize \
--copt=-funsafe-math-optimizations \
--copt=-ftree-loop-vectorize \
--copt=-fomit-frame-pointer \
--copt=-DRASPBERRY_PI \
--host_copt=-DRASPBERRY_PI \
//tensorflow/tools/pip_package:build_pip_package

$ sudo -s
# ./bazel-bin/tensorflow/tools/pip_package/build_pip_package /tmp/tensorflow_pkg
# exit
$ sudo pip3 install /tmp/tensorflow_pkg/tensorflow-1.11.0-cp35-cp35m-linux_armv7l.whl

Python3.x + jemalloc + MPI + MultiThread [C++ Only]

Edit tensorflow/tensorflow/contrib/mpi/mpi_rendezvous_mgr.cc Line139 / Line140, Line261.

  MPIRendezvousMgr* mgr =
      reinterpret_cast<MPIRendezvousMgr*>(this->rendezvous_mgr_);
- mgr->QueueRequest(parsed.FullKey().ToString(), step_id_,
-                   std::move(request_call), rendezvous_call);
+ mgr->QueueRequest(string(parsed.FullKey()), step_id_, std::move(request_call),
+                   rendezvous_call);
}

MPIRemoteRendezvous::~MPIRemoteRendezvous() {}

  std::function<MPISendTensorCall*()> res = std::bind(
      send_cb, status, send_args, recv_args, val, is_dead, mpi_send_call);
- SendQueueEntry req(parsed.FullKey().ToString().c_str(), std::move(res));
+ SendQueueEntry req(string(parsed.FullKey()), std::move(res));
  this->QueueSendRequest(req);

Edit tensorflow/tensorflow/contrib/mpi/mpi_rendezvous_mgr.h Line74

  void Init(const Rendezvous::ParsedKey& parsed, const int64 step_id,
            const bool is_dead) {
- mRes_.set_key(parsed.FullKey().ToString());
+ mRes_.set_key(string(parsed.FullKey()));
  mRes_.set_step_id(step_id);
  mRes_.mutable_response()->set_is_dead(is_dead);
  mRes_.mutable_response()->set_send_start_micros(

Edit tensorflow/tensorflow/contrib/lite/interpreter.cc Line127.

- context_.recommended_num_threads = -1;
+ context_.recommended_num_threads = 4;

$ sudo apt-get install -y libhdf5-dev

$ sudo pip3 install keras_applications==1.0.4 --no-deps

$ sudo pip3 install keras_preprocessing==1.0.2 --no-deps

$ sudo pip3 install h5py==2.8.0

$ sudo apt-get install -y openmpi-bin libopenmpi-dev

$ cd ~

$ git clone https://github.com/tensorflow/tensorflow.git

$ cd tensorflow

$ git checkout v1.11.0

$ ./configure

Please specify the location of python. [Default is /usr/bin/python]: /usr/bin/python3

Found possible Python library paths:

/usr/local/lib

/usr/lib/python3/dist-packages

/usr/local/lib/python3.5/dist-packages

/opt/movidius/caffe/python

Please input the desired Python library path to use. Default is [/usr/local/lib] /usr/local/lib/python3.5/dist-packages

Do you wish to build TensorFlow with jemalloc as malloc support? [Y/n]: y

jemalloc as malloc support will be enabled for Tensorflow.

Do you wish to build TensorFlow with Google Cloud Platform support? [Y/n]: n

No Google Cloud Platform support will be enabled for TensorFlow.

Do you wish to build TensorFlow with Hadoop File System support? [Y/n]: n

No Hadoop File System support will be enabled for TensorFlow.

Do you wish to build TensorFlow with Amazon AWS Platform support? [Y/n]: n

No Amazon AWS Platform support will be enabled for TensorFlow.

Do you wish to build TensorFlow with Apache Kafka Platform support? [Y/n]: n

No Apache Kafka Platform support will be enabled for TensorFlow.

Do you wish to build TensorFlow with XLA JIT support? [y/N]: n

No XLA JIT support will be enabled for TensorFlow.

Do you wish to build TensorFlow with GDR support? [y/N]: n

No GDR support will be enabled for TensorFlow.

Do you wish to build TensorFlow with VERBS support? [y/N]: n

No VERBS support will be enabled for TensorFlow.

Do you wish to build TensorFlow with nGraph support? [y/N]: n

No nGraph support will be enabled for TensorFlow.

Do you wish to build TensorFlow with OpenCL SYCL support? [y/N]: n

No OpenCL SYCL support will be enabled for TensorFlow.

Do you wish to build TensorFlow with CUDA support? [y/N]: n

No CUDA support will be enabled for TensorFlow.

Do you wish to download a fresh release of clang? (Experimental) [y/N]: n

Clang will not be downloaded.

Do you wish to build TensorFlow with MPI support? [y/N]: y

MPI support will be enabled for Tensorflow.

Please specify the MPI toolkit folder. [Default is /usr]: /usr/lib/arm-linux-gnueabihf/openmpi

Please specify optimization flags to use during compilation when bazel option "--config=opt" is specified [Default is -march=native]:

Would you like to interactively configure ./WORKSPACE for Android builds? [y/N]: n

Not configuring the WORKSPACE for Android builds.

Preconfigured Bazel build configs. You can use any of the below by adding "--config=<>" to your build command. See tools/bazel.rc for more details.

--config=mkl # Build with MKL support.

--config=monolithic # Config for mostly static monolithic build.

Configuration finished

$ sudo bazel build --config opt --local_resources 1024.0,0.5,0.5 \
--copt=-mfpu=neon-vfpv4 \
--copt=-ftree-vectorize \
--copt=-funsafe-math-optimizations \
--copt=-ftree-loop-vectorize \
--copt=-fomit-frame-pointer \
--copt=-DRASPBERRY_PI \
--host_copt=-DRASPBERRY_PI \
//tensorflow/tools/pip_package:build_pip_package

Python3.x + jemalloc + XLA JIT (Build impossible)

$ sudo apt-get install -y libhdf5-dev

$ sudo pip3 install keras_applications==1.0.4 --no-deps

$ sudo pip3 install keras_preprocessing==1.0.2 --no-deps

$ sudo pip3 install h5py==2.8.0

$ sudo apt-get install -y openmpi-bin libopenmpi-dev

$ JAVA_OPTIONS=-Xmx256M

$ cd ~

$ git clone https://github.com/tensorflow/tensorflow.git

$ cd tensorflow

$ git checkout v1.11.0

$ ./configure

Please specify the location of python. [Default is /usr/bin/python]: /usr/bin/python3

Found possible Python library paths:

/usr/local/lib

/usr/lib/python3/dist-packages

/usr/local/lib/python3.5/dist-packages

/opt/movidius/caffe/python

Please input the desired Python library path to use. Default is [/usr/local/lib] /usr/local/lib/python3.5/dist-packages

Do you wish to build TensorFlow with jemalloc as malloc support? [Y/n]: y

jemalloc as malloc support will be enabled for Tensorflow.

Do you wish to build TensorFlow with Google Cloud Platform support? [Y/n]: n

No Google Cloud Platform support will be enabled for TensorFlow.

Do you wish to build TensorFlow with Hadoop File System support? [Y/n]: n

No Hadoop File System support will be enabled for TensorFlow.

Do you wish to build TensorFlow with Amazon AWS Platform support? [Y/n]: n

No Amazon AWS Platform support will be enabled for TensorFlow.

Do you wish to build TensorFlow with Apache Kafka Platform support? [Y/n]: n

No Apache Kafka Platform support will be enabled for TensorFlow.

Do you wish to build TensorFlow with XLA JIT support? [y/N]: y

XLA JIT support will be enabled for TensorFlow.

Do you wish to build TensorFlow with GDR support? [y/N]: n

No GDR support will be enabled for TensorFlow.

Do you wish to build TensorFlow with VERBS support? [y/N]: n

No VERBS support will be enabled for TensorFlow.

Do you wish to build TensorFlow with nGraph support? [y/N]: n

No nGraph support will be enabled for TensorFlow.

Do you wish to build TensorFlow with OpenCL SYCL support? [y/N]: n

No OpenCL SYCL support will be enabled for TensorFlow.

Do you wish to build TensorFlow with CUDA support? [y/N]: n

No CUDA support will be enabled for TensorFlow.

Do you wish to download a fresh release of clang? (Experimental) [y/N]: n

Clang will not be downloaded.

Do you wish to build TensorFlow with MPI support? [y/N]: n

No MPI support will be enabled for TensorFlow.

Please specify optimization flags to use during compilation when bazel option "--config=opt" is specified [Default is -march=native]:

Would you like to interactively configure ./WORKSPACE for Android builds? [y/N]: n

Not configuring the WORKSPACE for Android builds.

Preconfigured Bazel build configs. You can use any of the below by adding "--config=<>" to your build command. See tools/bazel.rc for more details.

--config=mkl # Build with MKL support.

--config=monolithic # Config for mostly static monolithic build.

Configuration finished

$ sudo bazel build --config opt --local_resources 1024.0,0.5,0.5 \
--copt=-mfpu=neon-vfpv4 \
--copt=-ftree-vectorize \
--copt=-funsafe-math-optimizations \
--copt=-ftree-loop-vectorize \
--copt=-fomit-frame-pointer \
--copt=-DRASPBERRY_PI \
--host_copt=-DRASPBERRY_PI \
//tensorflow/tools/pip_package:build_pip_package

Python3.x + TX2 aarch64 - Bazel 0.18.1 (JetPack-L4T-3.3-linux-x64_b39)

- L4T R28.2.1(TX2 / TX2i)

- L4T R28.2(TX1)

- CUDA 9.0

- cuDNN 7.1.5

- TensorRT 4.0

- VisionWorks 1.6

- tensorflow/tensorflow#21574 (comment)
- tensorflow/serving#832
- https://docs.nvidia.com/deeplearning/sdk/nccl-archived/nccl_2213/nccl-install-guide/index.html

build --action_env PYTHON_BIN_PATH="/usr/bin/python3"

build --action_env PYTHON_LIB_PATH="/usr/local/lib/python3.5/dist-packages"

build --python_path="/usr/bin/python3"

build --define with_jemalloc=true

build:gcp --define with_gcp_support=true

build:hdfs --define with_hdfs_support=true

build:aws --define with_aws_support=true

build:kafka --define with_kafka_support=true

build:xla --define with_xla_support=true

build:gdr --define with_gdr_support=true

build:verbs --define with_verbs_support=true

build:ngraph --define with_ngraph_support=true

build --action_env TF_NEED_OPENCL_SYCL="0"

build --action_env TF_NEED_CUDA="1"

build --action_env CUDA_TOOLKIT_PATH="/usr/local/cuda-9.0"

build --action_env TF_CUDA_VERSION="9.0"

build --action_env CUDNN_INSTALL_PATH="/usr/lib/aarch64-linux-gnu"

build --action_env TF_CUDNN_VERSION="7"

build --action_env NCCL_INSTALL_PATH="/usr/local"

build --action_env TF_NCCL_VERSION="2"

build --action_env TF_CUDA_COMPUTE_CAPABILITIES="3.5,7.0"

build --action_env LD_LIBRARY_PATH="/usr/local/cuda-9.0/lib64:../src/.libs"

build --action_env TF_CUDA_CLANG="0"

build --action_env GCC_HOST_COMPILER_PATH="/usr/bin/gcc"

build --config=cuda

test --config=cuda

build --define grpc_no_ares=true

build:opt --copt=-march=native

build:opt --host_copt=-march=native

build:opt --define with_default_optimizations=true

$ sudo apt-get install -y libhdf5-dev

$ sudo pip3 install keras_applications==1.0.4 --no-deps

$ sudo pip3 install keras_preprocessing==1.0.2 --no-deps

$ sudo pip3 install h5py==2.8.0

$ sudo apt-get install -y openmpi-bin libopenmpi-dev

$ bazel build -c opt --config=cuda --local_resources 3072.0,4.0,1.0 --verbose_failures //tensorflow/tools/pip_package:build_pip_package

Tensorflow v1.12.0

============================================================

Tensorflow v1.12.0 - Bazel 0.18.1

============================================================

Python3.x (Nov 15, 2018 Under construction)

- tensorflow/BUILD

config_setting(

name = "no_aws_support",

define_values = {"no_aws_support": "false"},

visibility = ["//visibility:public"],

)

config_setting(

name = "no_gcp_support",

define_values = {"no_gcp_support": "false"},

visibility = ["//visibility:public"],

)

config_setting(

name = "no_hdfs_support",

define_values = {"no_hdfs_support": "false"},

visibility = ["//visibility:public"],

)

config_setting(

name = "no_ignite_support",

define_values = {"no_ignite_support": "false"},

visibility = ["//visibility:public"],

)

config_setting(

name = "no_kafka_support",

define_values = {"no_kafka_support": "false"},

visibility = ["//visibility:public"],

)

- bazel.rc

# Options to disable default on features

build:noaws --define=no_aws_support=true

build:nogcp --define=no_gcp_support=true

build:nohdfs --define=no_hdfs_support=true

build:nokafka --define=no_kafka_support=true

build:noignite --define=no_ignite_support=true

- configure.py

#set_build_var(environ_cp, 'TF_NEED_IGNITE', 'Apache Ignite',

# 'with_ignite_support', True, 'ignite')

## On Windows, we don't have MKL support and the build is always monolithic.

## So no need to print the following message.

## TODO(pcloudy): remove the following if check when they make sense on Windows

#if not is_windows():

# print('Preconfigured Bazel build configs. You can use any of the below by '

# 'adding "--config=<>" to your build command. See tools/bazel.rc for '

# 'more details.')

# config_info_line('mkl', 'Build with MKL support.')

# config_info_line('monolithic', 'Config for mostly static monolithic build.')

# config_info_line('gdr', 'Build with GDR support.')

# config_info_line('verbs', 'Build with libverbs support.')

# config_info_line('ngraph', 'Build with Intel nGraph support.')

print('Preconfigured Bazel build configs. You can use any of the below by '

'adding "--config=<>" to your build command. See .bazelrc for more '

'details.')

config_info_line('mkl', 'Build with MKL support.')

config_info_line('monolithic', 'Config for mostly static monolithic build.')

config_info_line('gdr', 'Build with GDR support.')

config_info_line('verbs', 'Build with libverbs support.')

config_info_line('ngraph', 'Build with Intel nGraph support.')

print('Preconfigured Bazel build configs to DISABLE default on features:')

config_info_line('noaws', 'Disable AWS S3 filesystem support.')

config_info_line('nogcp', 'Disable GCP support.')

config_info_line('nohdfs', 'Disable HDFS support.')

config_info_line('noignite', 'Disable Apache Ignite support.')

config_info_line('nokafka', 'Disable Apache Kafka support.')

- tensorflow/contrib/BUILD

# Description:

# contains parts of TensorFlow that are experimental or unstable and which are not supported.

licenses(["notice"]) # Apache 2.0

package(default_visibility = ["//tensorflow:__subpackages__"])

load("//third_party/mpi:mpi.bzl", "if_mpi")

load("@local_config_cuda//cuda:build_defs.bzl", "if_cuda")

load("//tensorflow:tensorflow.bzl", "if_not_windows")

load("//tensorflow:tensorflow.bzl", "if_not_windows_cuda")

py_library(

name = "contrib_py",

srcs = glob(

["**/*.py"],

exclude = [

"**/*_test.py",

],

),

srcs_version = "PY2AND3",

visibility = ["//visibility:public"],

deps = [

"//tensorflow/contrib/all_reduce",

"//tensorflow/contrib/batching:batch_py",

"//tensorflow/contrib/bayesflow:bayesflow_py",

"//tensorflow/contrib/boosted_trees:init_py",

"//tensorflow/contrib/checkpoint/python:checkpoint",

"//tensorflow/contrib/cluster_resolver:cluster_resolver_py",

"//tensorflow/contrib/coder:coder_py",

"//tensorflow/contrib/compiler:compiler_py",

"//tensorflow/contrib/compiler:xla",

"//tensorflow/contrib/autograph",

"//tensorflow/contrib/constrained_optimization",

"//tensorflow/contrib/copy_graph:copy_graph_py",

"//tensorflow/contrib/crf:crf_py",

"//tensorflow/contrib/cudnn_rnn:cudnn_rnn_py",

"//tensorflow/contrib/data",

"//tensorflow/contrib/deprecated:deprecated_py",

"//tensorflow/contrib/distribute:distribute",

"//tensorflow/contrib/distributions:distributions_py",

"//tensorflow/contrib/eager/python:tfe",

"//tensorflow/contrib/estimator:estimator_py",

"//tensorflow/contrib/factorization:factorization_py",

"//tensorflow/contrib/feature_column:feature_column_py",

"//tensorflow/contrib/framework:framework_py",

"//tensorflow/contrib/gan",

"//tensorflow/contrib/graph_editor:graph_editor_py",

"//tensorflow/contrib/grid_rnn:grid_rnn_py",

"//tensorflow/contrib/hadoop",

"//tensorflow/contrib/hooks",

"//tensorflow/contrib/image:distort_image_py",

"//tensorflow/contrib/image:image_py",

"//tensorflow/contrib/image:single_image_random_dot_stereograms_py",

"//tensorflow/contrib/input_pipeline:input_pipeline_py",

"//tensorflow/contrib/integrate:integrate_py",

"//tensorflow/contrib/keras",

"//tensorflow/contrib/kernel_methods",

"//tensorflow/contrib/labeled_tensor",

"//tensorflow/contrib/layers:layers_py",

"//tensorflow/contrib/learn",

"//tensorflow/contrib/legacy_seq2seq:seq2seq_py",

"//tensorflow/contrib/libsvm",

"//tensorflow/contrib/linear_optimizer:sdca_estimator_py",

"//tensorflow/contrib/linear_optimizer:sdca_ops_py",

"//tensorflow/contrib/lite/python:lite",

"//tensorflow/contrib/lookup:lookup_py",

"//tensorflow/contrib/losses:losses_py",

"//tensorflow/contrib/losses:metric_learning_py",

"//tensorflow/contrib/memory_stats:memory_stats_py",

"//tensorflow/contrib/meta_graph_transform",

"//tensorflow/contrib/metrics:metrics_py",

"//tensorflow/contrib/mixed_precision:mixed_precision",

"//tensorflow/contrib/model_pruning",

"//tensorflow/contrib/nccl:nccl_py",

"//tensorflow/contrib/nearest_neighbor:nearest_neighbor_py",

"//tensorflow/contrib/nn:nn_py",

"//tensorflow/contrib/opt:opt_py",

"//tensorflow/contrib/optimizer_v2:optimizer_v2_py",

"//tensorflow/contrib/periodic_resample:init_py",

"//tensorflow/contrib/predictor",

"//tensorflow/contrib/proto",

"//tensorflow/contrib/quantization:quantization_py",

"//tensorflow/contrib/quantize:quantize_graph",

"//tensorflow/contrib/receptive_field:receptive_field_py",

"//tensorflow/contrib/recurrent:recurrent_py",

"//tensorflow/contrib/reduce_slice_ops:reduce_slice_ops_py",

"//tensorflow/contrib/remote_fused_graph/pylib:remote_fused_graph_ops_py",

"//tensorflow/contrib/resampler:resampler_py",

"//tensorflow/contrib/rnn:rnn_py",

"//tensorflow/contrib/rpc",

"//tensorflow/contrib/saved_model:saved_model_py",

"//tensorflow/contrib/seq2seq:seq2seq_py",

"//tensorflow/contrib/signal:signal_py",

"//tensorflow/contrib/slim",

"//tensorflow/contrib/slim:nets",

"//tensorflow/contrib/solvers:solvers_py",

"//tensorflow/contrib/sparsemax:sparsemax_py",

"//tensorflow/contrib/specs",

"//tensorflow/contrib/staging",

"//tensorflow/contrib/stat_summarizer:stat_summarizer_py",

"//tensorflow/contrib/stateless",

"//tensorflow/contrib/summary:summary",

"//tensorflow/contrib/tensor_forest:init_py",

"//tensorflow/contrib/tensorboard",

"//tensorflow/contrib/testing:testing_py",

"//tensorflow/contrib/text:text_py",

"//tensorflow/contrib/tfprof",

"//tensorflow/contrib/timeseries",

"//tensorflow/contrib/tpu",

"//tensorflow/contrib/training:training_py",

"//tensorflow/contrib/util:util_py",

"//tensorflow/python:util",

"//tensorflow/python/estimator:estimator_py",

] + if_mpi(["//tensorflow/contrib/mpi_collectives:mpi_collectives_py"]) + select({

"//tensorflow:android": [],

"//tensorflow:ios": [],

"//tensorflow:linux_s390x": [],

"//tensorflow:windows": [],

"//tensorflow:no_kafka_support": [],

"//conditions:default": [

"//tensorflow/contrib/kafka",

],

}) + select({

"//tensorflow:android": [],

"//tensorflow:ios": [],

"//tensorflow:linux_s390x": [],

"//tensorflow:windows": [],

"//tensorflow:no_aws_support": [],

"//conditions:default": [

"//tensorflow/contrib/kinesis",

],

}) + select({

"//tensorflow:android": [],

"//tensorflow:ios": [],

"//tensorflow:linux_s390x": [],

"//tensorflow:windows": [],

"//conditions:default": [

"//tensorflow/contrib/fused_conv:fused_conv_py",

"//tensorflow/contrib/tensorrt:init_py",

"//tensorflow/contrib/ffmpeg:ffmpeg_ops_py",

],

}) + select({

"//tensorflow:android": [],

"//tensorflow:ios": [],

"//tensorflow:linux_s390x": [],

"//tensorflow:windows": [],

"//tensorflow:no_gcp_support": [],

"//conditions:default": [

"//tensorflow/contrib/bigtable",

"//tensorflow/contrib/cloud:cloud_py",

],

}) + select({

"//tensorflow:android": [],

"//tensorflow:ios": [],

"//tensorflow:linux_s390x": [],

"//tensorflow:windows": [],

"//tensorflow:no_ignite_support": [],

"//conditions:default": [

"//tensorflow/contrib/ignite",

],

}),

)

cc_library(

name = "contrib_kernels",

visibility = ["//visibility:public"],

deps = [

"//tensorflow/contrib/boosted_trees:boosted_trees_kernels",

"//tensorflow/contrib/coder:all_kernels",

"//tensorflow/contrib/factorization/kernels:all_kernels",

"//tensorflow/contrib/hadoop:dataset_kernels",

"//tensorflow/contrib/input_pipeline:input_pipeline_ops_kernels",

"//tensorflow/contrib/layers:sparse_feature_cross_op_kernel",

"//tensorflow/contrib/nearest_neighbor:nearest_neighbor_ops_kernels",

"//tensorflow/contrib/rnn:all_kernels",

"//tensorflow/contrib/seq2seq:beam_search_ops_kernels",

"//tensorflow/contrib/tensor_forest:model_ops_kernels",

"//tensorflow/contrib/tensor_forest:stats_ops_kernels",

"//tensorflow/contrib/tensor_forest:tensor_forest_kernels",

"//tensorflow/contrib/text:all_kernels",

] + if_mpi(["//tensorflow/contrib/mpi_collectives:mpi_collectives_py"]) + if_cuda([

"//tensorflow/contrib/nccl:nccl_kernels",

]) + select({

"//tensorflow:android": [],

"//tensorflow:ios": [],

"//tensorflow:linux_s390x": [],

"//tensorflow:windows": [],

"//tensorflow:no_kafka_support": [],

"//conditions:default": [

"//tensorflow/contrib/kafka:dataset_kernels",

],

}) + select({

"//tensorflow:android": [],

"//tensorflow:ios": [],

"//tensorflow:linux_s390x": [],

"//tensorflow:windows": [],

"//tensorflow:no_aws_support": [],

"//conditions:default": [

"//tensorflow/contrib/kinesis:dataset_kernels",

],

}) + if_not_windows([

"//tensorflow/contrib/tensorrt:trt_engine_op_kernel",

]),

)

cc_library(

name = "contrib_ops_op_lib",

visibility = ["//visibility:public"],

deps = [

"//tensorflow/contrib/boosted_trees:boosted_trees_ops_op_lib",

"//tensorflow/contrib/coder:all_ops",

"//tensorflow/contrib/factorization:all_ops",

"//tensorflow/contrib/framework:all_ops",

"//tensorflow/contrib/hadoop:dataset_ops_op_lib",

"//tensorflow/contrib/input_pipeline:input_pipeline_ops_op_lib",

"//tensorflow/contrib/layers:sparse_feature_cross_op_op_lib",

"//tensorflow/contrib/nccl:nccl_ops_op_lib",

"//tensorflow/contrib/nearest_neighbor:nearest_neighbor_ops_op_lib",

"//tensorflow/contrib/rnn:all_ops",

"//tensorflow/contrib/seq2seq:beam_search_ops_op_lib",

"//tensorflow/contrib/tensor_forest:model_ops_op_lib",

"//tensorflow/contrib/tensor_forest:stats_ops_op_lib",

"//tensorflow/contrib/tensor_forest:tensor_forest_ops_op_lib",

"//tensorflow/contrib/text:all_ops",

"//tensorflow/contrib/tpu:all_ops",

] + select({

"//tensorflow:android": [],

"//tensorflow:ios": [],

"//tensorflow:linux_s390x": [],

"//tensorflow:windows": [],

"//tensorflow:no_kafka_support": [],

"//conditions:default": [

"//tensorflow/contrib/kafka:dataset_ops_op_lib",

],

}) + select({

"//tensorflow:android": [],

"//tensorflow:ios": [],

"//tensorflow:linux_s390x": [],

"//tensorflow:windows": [],

"//tensorflow:no_aws_support": [],

"//conditions:default": [

"//tensorflow/contrib/kinesis:dataset_ops_op_lib",

],

}) + if_not_windows([

"//tensorflow/contrib/tensorrt:trt_engine_op_op_lib",

]) + select({

"//tensorflow:android": [],

"//tensorflow:ios": [],

"//tensorflow:linux_s390x": [],

"//tensorflow:windows": [],

"//tensorflow:no_ignite_support": [],

"//conditions:default": [

"//tensorflow/contrib/ignite:dataset_ops_op_lib",

],

}),

)
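The `deps` lists above are built by adding several `select()` expressions together. The Python sketch below models that pattern for three of the optional packages (kafka, kinesis, ignite). It is a simplification: where more than one condition is active it just takes the first one, whereas real Bazel applies stricter rules for multiple matches. `resolve_select` and `contrib_optional_deps` are hypothetical names used only for this illustration.

```python
from typing import Dict, List, Set

DEFAULT = "//conditions:default"


def resolve_select(branches: Dict[str, List[str]], active: Set[str]) -> List[str]:
    """Pick the branch of one select(): first active condition, else the default.

    Simplification: real Bazel has additional rules when several
    conditions match at once; this sketch does not model them.
    """
    for condition, value in branches.items():
        if condition != DEFAULT and condition in active:
            return value
    if DEFAULT in branches:
        return branches[DEFAULT]
    raise ValueError("no matching condition and no default branch")


def optional_dep(disable_setting: str, label: str) -> Dict[str, List[str]]:
    """Build a select() dictionary shaped like the ones in the listing."""
    return {
        "//tensorflow:android": [],
        "//tensorflow:ios": [],
        "//tensorflow:linux_s390x": [],
        "//tensorflow:windows": [],
        disable_setting: [],
        DEFAULT: [label],
    }


SELECTS = [
    optional_dep("//tensorflow:no_kafka_support", "//tensorflow/contrib/kafka"),
    optional_dep("//tensorflow:no_aws_support", "//tensorflow/contrib/kinesis"),
    optional_dep("//tensorflow:no_ignite_support", "//tensorflow/contrib/ignite"),
]


def contrib_optional_deps(active: Set[str]) -> List[str]:
    """Concatenate the chosen branch of every select(), like `+ select({...})`."""
    deps: List[str] = []
    for branches in SELECTS:
        deps += resolve_select(branches, active)
    return deps


print(contrib_optional_deps(set()))
# ['//tensorflow/contrib/kafka', '//tensorflow/contrib/kinesis', '//tensorflow/contrib/ignite']
print(contrib_optional_deps({"//tensorflow:no_kafka_support",
                             "//tensorflow:no_aws_support"}))
# ['//tensorflow/contrib/ignite']
```

Each optional package is dropped from the build only when one of the conditions in its own `select()` is active; the other packages are unaffected.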

- tensorflow/core/platform/default/build_config.bzl

# Platform-specific build configurations.

load("@protobuf_archive//:protobuf.bzl", "proto_gen")

load("//tensorflow:tensorflow.bzl", "if_not_mobile")

load("//tensorflow:tensorflow.bzl", "if_windows")

load("//tensorflow:tensorflow.bzl", "if_not_windows")

load("//tensorflow/core:platform/default/build_config_root.bzl", "if_static")

load("@local_config_cuda//cuda:build_defs.bzl", "if_cuda")

load(

"//third_party/mkl:build_defs.bzl",

"if_mkl_ml",

)

# Appends a suffix to a list of deps.
def tf_deps(deps, suffix):
    tf_deps = []

    # If the package name is in shorthand form (ie: does not contain a ':'),
    # expand it to the full name.
    for dep in deps:
        tf_dep = dep

        if not ":" in dep:
            dep_pieces = dep.split("/")
            tf_dep += ":" + dep_pieces[len(dep_pieces) - 1]
        tf_deps += [tf_dep + suffix]

    return tf_deps

# Modified from @cython//:Tools/rules.bzl

def pyx_library(

name,

deps = [],

py_deps = [],

srcs = [],

**kwargs):

"""Compiles a group of .pyx / .pxd / .py files.

First runs Cython to create .cpp files for each input .pyx or .py + .pxd

pair. Then builds a shared object for each, passing "deps" to each cc_binary

rule (includes Python headers by default). Finally, creates a py_library rule

with the shared objects and any pure Python "srcs", with py_deps as its

dependencies; the shared objects can be imported like normal Python files.

Args:

name: Name for the rule.

deps: C/C++ dependencies of the Cython (e.g. Numpy headers).

py_deps: Pure Python dependencies of the final library.

srcs: .py, .pyx, or .pxd files to either compile or pass through.

**kwargs: Extra keyword arguments passed to the py_library.

"""

# First filter out files that should be run compiled vs. passed through.

py_srcs = []

pyx_srcs = []

pxd_srcs = []

for src in srcs:

if src.endswith(".pyx") or (src.endswith(".py") and

src[:-3] + ".pxd" in srcs):

pyx_srcs.append(src)

elif src.endswith(".py"):

py_srcs.append(src)

else:

pxd_srcs.append(src)

if src.endswith("__init__.py"):

pxd_srcs.append(src)

# Invoke cython to produce the shared object libraries.

for filename in pyx_srcs:

native.genrule(

name = filename + "_cython_translation",

srcs = [filename],

outs = [filename.split(".")[0] + ".cpp"],

# Optionally use PYTHON_BIN_PATH on Linux platforms so that python 3

# works. Windows has issues with cython_binary so skip PYTHON_BIN_PATH.

cmd = "PYTHONHASHSEED=0 $(location @cython//:cython_binary) --cplus $(SRCS) --output-file $(OUTS)",

tools = ["@cython//:cython_binary"] + pxd_srcs,

)

shared_objects = []

for src in pyx_srcs:

stem = src.split(".")[0]

shared_object_name = stem + ".so"

native.cc_binary(

name = shared_object_name,

srcs = [stem + ".cpp"],

deps = deps + ["//third_party/python_runtime:headers"],

linkshared = 1,

)

shared_objects.append(shared_object_name)

# Now create a py_library with these shared objects as data.

native.py_library(

name = name,

srcs = py_srcs,

deps = py_deps,

srcs_version = "PY2AND3",

data = shared_objects,

**kwargs

)

def _proto_cc_hdrs(srcs, use_grpc_plugin = False):
    ret = [s[:-len(".proto")] + ".pb.h" for s in srcs]
    if use_grpc_plugin:
        ret += [s[:-len(".proto")] + ".grpc.pb.h" for s in srcs]
    return ret

def _proto_cc_srcs(srcs, use_grpc_plugin = False):
    ret = [s[:-len(".proto")] + ".pb.cc" for s in srcs]
    if use_grpc_plugin:
        ret += [s[:-len(".proto")] + ".grpc.pb.cc" for s in srcs]
    return ret

def _proto_py_outs(srcs, use_grpc_plugin = False):
    ret = [s[:-len(".proto")] + "_pb2.py" for s in srcs]
    if use_grpc_plugin:
        ret += [s[:-len(".proto")] + "_pb2_grpc.py" for s in srcs]
    return ret

# Re-defined protocol buffer rule to allow building "header only" protocol

# buffers, to avoid duplicate registrations. Also allows non-iterable cc_libs

# containing select() statements.

def cc_proto_library(

name,

srcs = [],

deps = [],

cc_libs = [],

include = None,

protoc = "@protobuf_archive//:protoc",

internal_bootstrap_hack = False,

use_grpc_plugin = False,

use_grpc_namespace = False,

default_header = False,

**kargs):

"""Bazel rule to create a C++ protobuf library from proto source files.

Args:

name: the name of the cc_proto_library.

srcs: the .proto files of the cc_proto_library.

deps: a list of dependency labels; must be cc_proto_library.

cc_libs: a list of other cc_library targets depended by the generated

cc_library.

include: a string indicating the include path of the .proto files.

protoc: the label of the protocol compiler to generate the sources.

internal_bootstrap_hack: a flag indicating that the cc_proto_library is used only

for bootstrapping. When it is set to True, no files will be generated.

The rule will simply be a provider for .proto files, so that other

cc_proto_library can depend on it.

use_grpc_plugin: a flag to indicate whether to call the grpc C++ plugin

when processing the proto files.

default_header: Controls the naming of generated rules. If True, the `name`

rule will be header-only, and an _impl rule will contain the

implementation. Otherwise the header-only rule (name + "_headers_only")

must be referred to explicitly.

**kargs: other keyword arguments that are passed to cc_library.

"""

includes = []

if include != None:

includes = [include]

if internal_bootstrap_hack:

# For pre-checked-in generated files, we add the internal_bootstrap_hack

# which will skip the codegen action.

proto_gen(

name = name + "_genproto",

srcs = srcs,

includes = includes,

protoc = protoc,

visibility = ["//visibility:public"],

deps = [s + "_genproto" for s in deps],

)

# An empty cc_library to make rule dependency consistent.

native.cc_library(

name = name,

**kargs

)

return

grpc_cpp_plugin = None

plugin_options = []

if use_grpc_plugin:

grpc_cpp_plugin = "//external:grpc_cpp_plugin"

if use_grpc_namespace:

plugin_options = ["services_namespace=grpc"]

gen_srcs = _proto_cc_srcs(srcs, use_grpc_plugin)

gen_hdrs = _proto_cc_hdrs(srcs, use_grpc_plugin)

outs = gen_srcs + gen_hdrs

proto_gen(

name = name + "_genproto",

srcs = srcs,

outs = outs,

gen_cc = 1,

includes = includes,

plugin = grpc_cpp_plugin,

plugin_language = "grpc",

plugin_options = plugin_options,

protoc = protoc,

visibility = ["//visibility:public"],

deps = [s + "_genproto" for s in deps],

)

if use_grpc_plugin:

cc_libs += select({

"//tensorflow:linux_s390x": ["//external:grpc_lib_unsecure"],

"//conditions:default": ["//external:grpc_lib"],

})

if default_header:

header_only_name = name

impl_name = name + "_impl"

else:

header_only_name = name + "_headers_only"

impl_name = name

native.cc_library(

name = impl_name,

srcs = gen_srcs,

hdrs = gen_hdrs,

deps = cc_libs + deps,

includes = includes,

**kargs

)

native.cc_library(

name = header_only_name,

deps = ["@protobuf_archive//:protobuf_headers"] + if_static([impl_name]),

hdrs = gen_hdrs,

**kargs

)

# Re-defined protocol buffer rule to bring in the change introduced in commit

# https://github.com/google/protobuf/commit/294b5758c373cbab4b72f35f4cb62dc1d8332b68

# which was not part of a stable protobuf release in 04/2018.

# TODO(jsimsa): Remove this once the protobuf dependency version is updated

# to include the above commit.

def py_proto_library(

name,

srcs = [],

deps = [],

py_libs = [],

py_extra_srcs = [],

include = None,

default_runtime = "@protobuf_archive//:protobuf_python",

protoc = "@protobuf_archive//:protoc",

use_grpc_plugin = False,

**kargs):

"""Bazel rule to create a Python protobuf library from proto source files

NOTE: the rule is only an internal workaround to generate protos. The

interface may change and the rule may be removed when bazel has introduced

the native rule.

Args:

name: the name of the py_proto_library.

srcs: the .proto files of the py_proto_library.

deps: a list of dependency labels; must be py_proto_library.

py_libs: a list of other py_library targets depended by the generated

py_library.

py_extra_srcs: extra source files that will be added to the output

py_library. This attribute is used for internal bootstrapping.

include: a string indicating the include path of the .proto files.

default_runtime: the implicitly default runtime which will be depended on by

the generated py_library target.

protoc: the label of the protocol compiler to generate the sources.

use_grpc_plugin: a flag to indicate whether to call the grpc Python plugin

when processing the proto files.

**kargs: other keyword arguments that are passed to py_library.

"""

outs = _proto_py_outs(srcs, use_grpc_plugin)

includes = []

if include != None:

includes = [include]

grpc_python_plugin = None

if use_grpc_plugin:

grpc_python_plugin = "//external:grpc_python_plugin"

# Note: Generated grpc code depends on Python grpc module. This dependency

# is not explicitly listed in py_libs. Instead, host system is assumed to

# have grpc installed.

proto_gen(

name = name + "_genproto",

srcs = srcs,

outs = outs,

gen_py = 1,

includes = includes,

plugin = grpc_python_plugin,

plugin_language = "grpc",

protoc = protoc,

visibility = ["//visibility:public"],

deps = [s + "_genproto" for s in deps],

)

if default_runtime and not default_runtime in py_libs + deps:

py_libs = py_libs + [default_runtime]

native.py_library(

name = name,

srcs = outs + py_extra_srcs,

deps = py_libs + deps,

imports = includes,

**kargs

)

def tf_proto_library_cc(

name,

srcs = [],

has_services = None,

protodeps = [],

visibility = [],

testonly = 0,

cc_libs = [],

cc_stubby_versions = None,

cc_grpc_version = None,

j2objc_api_version = 1,

cc_api_version = 2,

dart_api_version = 2,

java_api_version = 2,

py_api_version = 2,

js_api_version = 2,

js_codegen = "jspb",

default_header = False):

js_codegen = js_codegen # unused argument

js_api_version = js_api_version # unused argument

native.filegroup(

name = name + "_proto_srcs",

srcs = srcs + tf_deps(protodeps, "_proto_srcs"),

testonly = testonly,

visibility = visibility,

)

use_grpc_plugin = None

if cc_grpc_version:

use_grpc_plugin = True

cc_deps = tf_deps(protodeps, "_cc")

cc_name = name + "_cc"

if not srcs:

# This is a collection of sub-libraries. Build header-only and impl

# libraries containing all the sources.

proto_gen(

name = cc_name + "_genproto",

protoc = "@protobuf_archive//:protoc",

visibility = ["//visibility:public"],

deps = [s + "_genproto" for s in cc_deps],

)

native.cc_library(

name = cc_name,

deps = cc_deps + ["@protobuf_archive//:protobuf_headers"] + if_static([name + "_cc_impl"]),

testonly = testonly,

visibility = visibility,

)

native.cc_library(

name = cc_name + "_impl",

deps = [s + "_impl" for s in cc_deps] + ["@protobuf_archive//:cc_wkt_protos"],

)

return

cc_proto_library(

name = cc_name,

testonly = testonly,

srcs = srcs,

cc_libs = cc_libs + if_static(

["@protobuf_archive//:protobuf"],

["@protobuf_archive//:protobuf_headers"],

),

copts = if_not_windows([

"-Wno-unknown-warning-option",

"-Wno-unused-but-set-variable",

"-Wno-sign-compare",

]),

default_header = default_header,

protoc = "@protobuf_archive//:protoc",

use_grpc_plugin = use_grpc_plugin,

visibility = visibility,

deps = cc_deps + ["@protobuf_archive//:cc_wkt_protos"],

)

def tf_proto_library_py(

name,

srcs = [],

protodeps = [],

deps = [],

visibility = [],

testonly = 0,

srcs_version = "PY2AND3",

use_grpc_plugin = False):

py_deps = tf_deps(protodeps, "_py")

py_name = name + "_py"

if not srcs:

# This is a collection of sub-libraries. Build header-only and impl

# libraries containing all the sources.

proto_gen(

name = py_name + "_genproto",

protoc = "@protobuf_archive//:protoc",

visibility = ["//visibility:public"],

deps = [s + "_genproto" for s in py_deps],

)

native.py_library(

name = py_name,

deps = py_deps + ["@protobuf_archive//:protobuf_python"],

testonly = testonly,

visibility = visibility,

)

return

py_proto_library(

name = py_name,

testonly = testonly,

srcs = srcs,

default_runtime = "@protobuf_archive//:protobuf_python",

protoc = "@protobuf_archive//:protoc",

srcs_version = srcs_version,

use_grpc_plugin = use_grpc_plugin,

visibility = visibility,

deps = deps + py_deps + ["@protobuf_archive//:protobuf_python"],

)

def tf_jspb_proto_library(**kwargs):

pass

def tf_nano_proto_library(**kwargs):

pass

def tf_proto_library(

name,

srcs = [],

has_services = None,

protodeps = [],

visibility = [],

testonly = 0,

cc_libs = [],

cc_api_version = 2,

cc_grpc_version = None,

dart_api_version = 2,

j2objc_api_version = 1,

java_api_version = 2,

py_api_version = 2,

js_api_version = 2,

js_codegen = "jspb",

provide_cc_alias = False,

default_header = False):

"""Make a proto library, possibly depending on other proto libraries."""

_ignore = (js_api_version, js_codegen, provide_cc_alias)

tf_proto_library_cc(

name = name,

testonly = testonly,

srcs = srcs,

cc_grpc_version = cc_grpc_version,

cc_libs = cc_libs,

default_header = default_header,

protodeps = protodeps,

visibility = visibility,

)

tf_proto_library_py(

name = name,

testonly = testonly,

srcs = srcs,

protodeps = protodeps,

srcs_version = "PY2AND3",

use_grpc_plugin = has_services,

visibility = visibility,

)

# A list of all files under platform matching the pattern in 'files'. In

# contrast with 'tf_platform_srcs' below, which selectively collects files that

# must be compiled in the 'default' platform, this is a list of all headers

# mentioned in the platform/* files.

def tf_platform_hdrs(files):

return native.glob(["platform/*/" + f for f in files])

def tf_platform_srcs(files):

base_set = ["platform/default/" + f for f in files]

windows_set = base_set + ["platform/windows/" + f for f in files]

posix_set = base_set + ["platform/posix/" + f for f in files]

# Handle cases where we must also bring the posix file in. Usually, the list

# of files to build on windows builds is just all the stuff in the

# windows_set. However, in some cases the implementations in 'posix/' are

# just what is necessary and historically we choose to simply use the posix

# file instead of making a copy in 'windows'.

for f in files:

if f == "error.cc":

windows_set.append("platform/posix/" + f)

return select({

"//tensorflow:windows": native.glob(windows_set),

"//conditions:default": native.glob(posix_set),

})

def tf_additional_lib_hdrs(exclude = []):

windows_hdrs = native.glob([

"platform/default/*.h",

"platform/windows/*.h",

"platform/posix/error.h",

], exclude = exclude)

return select({

"//tensorflow:windows": windows_hdrs,

"//conditions:default": native.glob([

"platform/default/*.h",

"platform/posix/*.h",

], exclude = exclude),

})

def tf_additional_lib_srcs(exclude = []):

windows_srcs = native.glob([

"platform/default/*.cc",

"platform/windows/*.cc",

"platform/posix/error.cc",

], exclude = exclude)

return select({

"//tensorflow:windows": windows_srcs,

"//conditions:default": native.glob([

"platform/default/*.cc",

"platform/posix/*.cc",

], exclude = exclude),

})

def tf_additional_minimal_lib_srcs():

return [

"platform/default/integral_types.h",

"platform/default/mutex.h",

]

def tf_additional_proto_hdrs():

return [

"platform/default/integral_types.h",

"platform/default/logging.h",

"platform/default/protobuf.h",

] + if_windows([

"platform/windows/integral_types.h",

])

def tf_additional_proto_compiler_hdrs():

return [

"platform/default/protobuf_compiler.h",

]

def tf_additional_proto_srcs():

return [

"platform/default/protobuf.cc",

]

def tf_additional_human_readable_json_deps():

return []

def tf_additional_all_protos():

return ["//tensorflow/core:protos_all"]

def tf_protos_all_impl():

return ["//tensorflow/core:protos_all_cc_impl"]

def tf_protos_all():

return if_static(

extra_deps = tf_protos_all_impl(),

otherwise = ["//tensorflow/core:protos_all_cc"],

)

def tf_protos_grappler_impl():

return ["//tensorflow/core/grappler/costs:op_performance_data_cc_impl"]

def tf_protos_grappler():

return if_static(

extra_deps = tf_protos_grappler_impl(),

otherwise = ["//tensorflow/core/grappler/costs:op_performance_data_cc"],

)

def tf_additional_cupti_wrapper_deps():

return ["//tensorflow/core/platform/default/gpu:cupti_wrapper"]

def tf_additional_device_tracer_srcs():

return ["platform/default/device_tracer.cc"]

def tf_additional_device_tracer_cuda_deps():

return []

def tf_additional_device_tracer_deps():

return []

def tf_additional_libdevice_data():

return []

def tf_additional_libdevice_deps():

return ["@local_config_cuda//cuda:cuda_headers"]

def tf_additional_libdevice_srcs():

return ["platform/default/cuda_libdevice_path.cc"]

def tf_additional_test_deps():

return []

def tf_additional_test_srcs():

return [

"platform/default/test_benchmark.cc",

] + select({

"//tensorflow:windows": [

"platform/windows/test.cc",

],

"//conditions:default": [

"platform/posix/test.cc",

],

})

def tf_kernel_tests_linkstatic():

return 0

def tf_additional_lib_defines():

"""Additional defines needed to build TF libraries."""

return []

def tf_additional_lib_deps():

"""Additional dependencies needed to build TF libraries."""

return [

"@com_google_absl//absl/base:base",

"@com_google_absl//absl/container:inlined_vector",

"@com_google_absl//absl/types:span",

"@com_google_absl//absl/types:optional",

] + if_static(

["@nsync//:nsync_cpp"],

["@nsync//:nsync_headers"],

)

def tf_additional_core_deps():

return select({

"//tensorflow:android": [],

"//tensorflow:ios": [],

"//tensorflow:linux_s390x": [],

"//tensorflow:windows": [],

"//tensorflow:no_gcp_support": [],

"//conditions:default": [

"//tensorflow/core/platform/cloud:gcs_file_system",

],

}) + select({

"//tensorflow:android": [],

"//tensorflow:ios": [],

"//tensorflow:linux_s390x": [],

"//tensorflow:windows": [],

"//tensorflow:no_hdfs_support": [],

"//conditions:default": [

"//tensorflow/core/platform/hadoop:hadoop_file_system",

],

}) + select({

"//tensorflow:android": [],

"//tensorflow:ios": [],

"//tensorflow:linux_s390x": [],

"//tensorflow:windows": [],

"//tensorflow:no_aws_support": [],

"//conditions:default": [

"//tensorflow/core/platform/s3:s3_file_system",

],

})

# TODO(jart, jhseu): Delete when GCP is default on.

def tf_additional_cloud_op_deps():

return select({

"//tensorflow:android": [],

"//tensorflow:ios": [],

"//tensorflow:linux_s390x": [],

"//tensorflow:windows": [],

"//tensorflow:no_gcp_support": [],

"//conditions:default": [

"//tensorflow/contrib/cloud:bigquery_reader_ops_op_lib",

"//tensorflow/contrib/cloud:gcs_config_ops_op_lib",

],

})

# TODO(jart, jhseu): Delete when GCP is default on.

def tf_additional_cloud_kernel_deps():

return select({

"//tensorflow:android": [],

"//tensorflow:windows": [],

"//tensorflow:ios": [],

"//tensorflow:linux_s390x": [],

"//conditions:default": [

"//tensorflow/contrib/cloud/kernels:bigquery_reader_ops",

"//tensorflow/contrib/cloud/kernels:gcs_config_ops",

],

})

def tf_lib_proto_parsing_deps():

return [

":protos_all_cc",

"//third_party/eigen3",

"//tensorflow/core/platform/default/build_config:proto_parsing",

]

def tf_lib_proto_compiler_deps():

return [

"@protobuf_archive//:protoc_lib",

]

def tf_additional_verbs_lib_defines():

return select({

"//tensorflow:with_verbs_support": ["TENSORFLOW_USE_VERBS"],

"//conditions:default": [],

})

def tf_additional_mpi_lib_defines():

return select({

"//tensorflow:with_mpi_support": ["TENSORFLOW_USE_MPI"],

"//conditions:default": [],

})

def tf_additional_gdr_lib_defines():

return select({

"//tensorflow:with_gdr_support": ["TENSORFLOW_USE_GDR"],

"//conditions:default": [],

})

def tf_py_clif_cc(name, visibility = None, **kwargs):

pass

def tf_pyclif_proto_library(

name,

proto_lib,

proto_srcfile = "",

visibility = None,

**kwargs):

pass

def tf_additional_binary_deps():

return ["@nsync//:nsync_cpp"] + if_cuda(

[

"//tensorflow/stream_executor:cuda_platform",

"//tensorflow/core/platform/default/build_config:cuda",

],

) + [

# TODO(allenl): Split these out into their own shared objects (they are

# here because they are shared between contrib/ op shared objects and

# core).

"//tensorflow/core/kernels:lookup_util",

"//tensorflow/core/util/tensor_bundle",

] + if_mkl_ml(

[

"//third_party/mkl:intel_binary_blob",

],

)
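Two of the small helpers in the build_config.bzl listing above, `tf_deps` and `_proto_cc_srcs`, are plain string manipulation and can be tried outside Bazel. The Python sketch below ports them as closely as possible (the leading underscore is dropped from `proto_cc_srcs`). The label `//tensorflow/core/example` and the file name `foo.proto` are made-up inputs chosen only to show the shorthand expansion and the generated file names.

```python
from typing import List


def tf_deps(deps: List[str], suffix: str) -> List[str]:
    """Append a suffix to each label, expanding shorthand labels first.

    A shorthand label such as '//a/b' (no ':') is treated as '//a/b:b'.
    """
    expanded = []
    for dep in deps:
        tf_dep = dep
        if ":" not in dep:
            tf_dep += ":" + dep.split("/")[-1]
        expanded.append(tf_dep + suffix)
    return expanded


def proto_cc_srcs(srcs: List[str], use_grpc_plugin: bool = False) -> List[str]:
    """Names of the C++ sources generated for each .proto file."""
    ret = [s[:-len(".proto")] + ".pb.cc" for s in srcs]
    if use_grpc_plugin:
        ret += [s[:-len(".proto")] + ".grpc.pb.cc" for s in srcs]
    return ret


# Hypothetical shorthand label plus a label that already names its target.
print(tf_deps(["//tensorflow/core/example", "//tensorflow/core:protos_all"], "_cc"))
# ['//tensorflow/core/example:example_cc', '//tensorflow/core:protos_all_cc']

# Hypothetical proto file name.
print(proto_cc_srcs(["foo.proto"], use_grpc_plugin=True))
# ['foo.pb.cc', 'foo.grpc.pb.cc']
```

This is why `tf_proto_library_cc` can pass `protodeps` written in either form: both end up as fully qualified `..._cc` targets.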

- tensorflow/tools/lib_package/BUILD

# Packaging for TensorFlow artifacts other than the Python API (pip whl).

# This includes the C API, Java API, and protocol buffer files.

package(default_visibility = ["//visibility:private"])

load("@bazel_tools//tools/build_defs/pkg:pkg.bzl", "pkg_tar")

load("@local_config_syslibs//:build_defs.bzl", "if_not_system_lib")

load("//tensorflow:tensorflow.bzl", "tf_binary_additional_srcs")

load("//tensorflow:tensorflow.bzl", "if_cuda")

load("//third_party/mkl:build_defs.bzl", "if_mkl")

genrule(

name = "libtensorflow_proto",

srcs = ["//tensorflow/core:protos_all_proto_srcs"],

outs = ["libtensorflow_proto.zip"],

cmd = "zip $@ $(SRCS)",

)

pkg_tar(

name = "libtensorflow",

extension = "tar.gz",

# Mark as "manual" till

# https://github.com/bazelbuild/bazel/issues/2352

# and https://github.com/bazelbuild/bazel/issues/1580

# are resolved, otherwise these rules break when built

# with Python 3.

tags = ["manual"],

deps = [

":cheaders",

":clib",

":clicenses",

":eager_cheaders",

],

)

pkg_tar(
    name = "libtensorflow_jni",
    extension = "tar.gz",
    files = [
        "include/tensorflow/jni/LICENSE",
        "//tensorflow/java:libtensorflow_jni",
    ],
    # Mark as "manual" till
    # https://github.com/bazelbuild/bazel/issues/2352
    # and https://github.com/bazelbuild/bazel/issues/1580
    # are resolved, otherwise these rules break when built
    # with Python 3.
    tags = ["manual"],
    deps = [":common_deps"],
)

# Shared objects that all TensorFlow libraries depend on.
pkg_tar(
    name = "common_deps",
    files = tf_binary_additional_srcs(),
    tags = ["manual"],
)

pkg_tar(
    name = "cheaders",
    files = [
        "//tensorflow/c:headers",
    ],
    package_dir = "include/tensorflow/c",
    # Mark as "manual" till
    # https://github.com/bazelbuild/bazel/issues/2352
    # and https://github.com/bazelbuild/bazel/issues/1580
    # are resolved, otherwise these rules break when built
    # with Python 3.
    tags = ["manual"],
)

pkg_tar(
    name = "eager_cheaders",
    files = [
        "//tensorflow/c/eager:headers",
    ],
    package_dir = "include/tensorflow/c/eager",
    # Mark as "manual" till
    # https://github.com/bazelbuild/bazel/issues/2352
    # and https://github.com/bazelbuild/bazel/issues/1580
    # are resolved, otherwise these rules break when built
    # with Python 3.
    tags = ["manual"],
)

pkg_tar(
    name = "clib",
    files = ["//tensorflow:libtensorflow.so"],
    package_dir = "lib",
    # Mark as "manual" till
    # https://github.com/bazelbuild/bazel/issues/2352
    # and https://github.com/bazelbuild/bazel/issues/1580
    # are resolved, otherwise these rules break when built
    # with Python 3.
    tags = ["manual"],
    deps = [":common_deps"],
)

pkg_tar(
    name = "clicenses",
    files = [":include/tensorflow/c/LICENSE"],
    package_dir = "include/tensorflow/c",
    # Mark as "manual" till
    # https://github.com/bazelbuild/bazel/issues/2352
    # and https://github.com/bazelbuild/bazel/issues/1580
    # are resolved, otherwise these rules break when built
    # with Python 3.
    tags = ["manual"],
)

genrule(
    name = "clicenses_generate",
    srcs = [
        "//third_party/hadoop:LICENSE.txt",
        "//third_party/eigen3:LICENSE",
        "//third_party/fft2d:LICENSE",
        "@boringssl//:LICENSE",
        "@com_googlesource_code_re2//:LICENSE",
        "@curl//:COPYING",
        "@double_conversion//:LICENSE",
        "@eigen_archive//:COPYING.MPL2",
        "@farmhash_archive//:COPYING",
        "@fft2d//:fft/readme.txt",
        "@gemmlowp//:LICENSE",
        "@gif_archive//:COPYING",
        "@highwayhash//:LICENSE",
        "@icu//:icu4c/LICENSE",
        "@jpeg//:LICENSE.md",
        "@llvm//:LICENSE.TXT",
        "@lmdb//:LICENSE",
        "@local_config_sycl//sycl:LICENSE.text",
        "@nasm//:LICENSE",
        "@nsync//:LICENSE",
        "@png_archive//:LICENSE",
        "@protobuf_archive//:LICENSE",
        "@snappy//:COPYING",
        "@zlib_archive//:zlib.h",
    ] + select({
        "//tensorflow:android": [],
        "//tensorflow:ios": [],
        "//tensorflow:linux_s390x": [],
        "//tensorflow:windows": [],
        "//tensorflow:no_aws_support": [],
        "//conditions:default": [
            "@aws//:LICENSE",
        ],
    }) + select({
        "//tensorflow:android": [],
        "//tensorflow:ios": [],
        "//tensorflow:linux_s390x": [],
        "//tensorflow:windows": [],
        "//tensorflow:no_gcp_support": [],
        "//conditions:default": [
            "@com_github_googlecloudplatform_google_cloud_cpp//:LICENSE",
        ],
    }) + select({
        "//tensorflow/core/kernels:xsmm": [
            "@libxsmm_archive//:LICENSE.md",
        ],
        "//conditions:default": [],
    }) + if_cuda([
        "@cub_archive//:LICENSE.TXT",
    ]) + if_mkl([
        "//third_party/mkl:LICENSE",
        "//third_party/mkl_dnn:LICENSE",
    ]) + if_not_system_lib(
        "grpc",
        [
            "@grpc//:LICENSE",
            "@grpc//third_party/nanopb:LICENSE.txt",
            "@grpc//third_party/address_sorting:LICENSE",
        ],
    ),
    outs = ["include/tensorflow/c/LICENSE"],
    cmd = "$(location :concat_licenses.sh) $(SRCS) >$@",
    tools = [":concat_licenses.sh"],
)

genrule(
    name = "jnilicenses_generate",
    srcs = [
        "//third_party/hadoop:LICENSE.txt",
        "//third_party/eigen3:LICENSE",
        "//third_party/fft2d:LICENSE",
        "@boringssl//:LICENSE",
        "@com_googlesource_code_re2//:LICENSE",
        "@curl//:COPYING",
        "@double_conversion//:LICENSE",
        "@eigen_archive//:COPYING.MPL2",
        "@farmhash_archive//:COPYING",
        "@fft2d//:fft/readme.txt",
        "@gemmlowp//:LICENSE",
        "@gif_archive//:COPYING",
        "@highwayhash//:LICENSE",
        "@icu//:icu4j/main/shared/licenses/LICENSE",
        "@jpeg//:LICENSE.md",
        "@llvm//:LICENSE.TXT",
        "@lmdb//:LICENSE",
        "@local_config_sycl//sycl:LICENSE.text",
        "@nasm//:LICENSE",
        "@nsync//:LICENSE",
        "@png_archive//:LICENSE",
        "@protobuf_archive//:LICENSE",
        "@snappy//:COPYING",
        "@zlib_archive//:zlib.h",
    ] + select({
        "//tensorflow:android": [],
        "//tensorflow:ios": [],
        "//tensorflow:linux_s390x": [],
        "//tensorflow:windows": [],
        "//tensorflow:no_aws_support": [],
        "//conditions:default": [
            "@aws//:LICENSE",
        ],
    }) + select({
        "//tensorflow:android": [],
        "//tensorflow:ios": [],
        "//tensorflow:linux_s390x": [],
        "//tensorflow:windows": [],
        "//tensorflow:no_gcp_support": [],
        "//conditions:default": [
            "@com_github_googlecloudplatform_google_cloud_cpp//:LICENSE",
        ],
    }) + select({
        "//tensorflow/core/kernels:xsmm": [
            "@libxsmm_archive//:LICENSE.md",
        ],
        "//conditions:default": [],
    }) + if_cuda([
        "@cub_archive//:LICENSE.TXT",
    ]) + if_mkl([
        "//third_party/mkl:LICENSE",
        "//third_party/mkl_dnn:LICENSE",
    ]),
    outs = ["include/tensorflow/jni/LICENSE"],
    cmd = "$(location :concat_licenses.sh) $(SRCS) >$@",
    tools = [":concat_licenses.sh"],
)

sh_test(
    name = "libtensorflow_test",
    size = "small",
    srcs = ["libtensorflow_test.sh"],
    data = [
        "libtensorflow_test.c",
        ":libtensorflow.tar.gz",
    ],
    # Mark as "manual" till
    # https://github.com/bazelbuild/bazel/issues/2352
    # and https://github.com/bazelbuild/bazel/issues/1580
    # are resolved, otherwise these rules break when built
    # with Python 3.
    # Till then, this test is explicitly executed when building
    # the release by tensorflow/tools/ci_build/builds/libtensorflow.sh
    tags = ["manual"],
)

sh_test(
    name = "libtensorflow_java_test",
    size = "small",
    srcs = ["libtensorflow_java_test.sh"],
    data = [
        ":LibTensorFlowTest.java",
        ":libtensorflow_jni.tar.gz",
        "//tensorflow/java:libtensorflow.jar",
    ],
    # Mark as "manual" till
    # https://github.com/bazelbuild/bazel/issues/2352
    # and https://github.com/bazelbuild/bazel/issues/1580