mit-acl / cadrl_ros

ROS package for dynamic obstacle avoidance for ground robots trained with deep RL

How are you @mfe7?

I found "Robot designed for socially acceptable navigation" and read it.

I have one question about the diffusion map on page 48.

-> How are the diffusion map and CADRL combined?

My understanding is as follows.

First, a grid map is used to build the diffusion map, which is then fed into an MPC.

The MPC generates trajectories that avoid collisions with the map.

CADRL then takes the best trajectory to drive along and uses it as its subgoal.

In this view, is CADRL used as the tracking module?

Thank you for reading this issue.

Hello, thanks for sharing your awesome work. I'm a beginner in this field, and this is the first time I have worked with it. I have been stuck on this problem for a long time. I hope you can help me, thank you very much!

When I run **./build_docker.sh**, there is an error at the step pip install IPython==5.7 ipykernel==4.10 jupyter.

I don't know how to solve it.

Thank you very much!

Can it run on Ubuntu 20.04 with ROS Noetic?

Hey Michael,

I tried to open the datasets in the Dropbox folder, but the link doesn't work. Could you share a new link?

Thank you very much!

Happy new year to you!

Bing Han

@mfe7 Hi, professor. Your work is wonderful, and we love the way you built this project. I got some errors while training your model that I would like to clarify with you. If you have time to discuss them with me, it would be a great help in solving my problem. I would like to continue this conversation privately; my Gmail address is [email protected]. If you could contact me, it would be greatly helpful for my team. Thanks in advance for the help.

How can I obtain the initial training set that the paper says was released to the public?

I have two questions.

(1) Fundamentally, can CADRL avoid newly detected obstacles that are not included in the static map, or dynamic objects other than people?

(2) If I train in a 4-agent environment, can CADRL still perform well in an environment with 5 or more agents?

Hi, sorry to bother you again.

I found the test cases (preset_testCases, num_agents:10) you used and ran them again using the checkpoint file you provided (gym-collision-avoidance/gym_collision_avoidance/envs/policies/GA3C_CADRL/checkpoints/IROS18/network_01900000) with vpref=1.0, DT=0.2, but it fails. When I reduce the agent radius from 0.5 to 0.3, it works, but with a slightly different trajectory.

Is this normal or am I using the wrong parameter file?

Thanks for your help~

Hello :)

First of all, great job! It's absolutely amazing what you all did for this research.

As a total beginner in ROS, I would love it if you can clarify these for me:

Again, I am just getting started with ROS and would love to understand it a bit better :) I hope you don't mind clarifying these :D

Thank you!

I cannot find ford_msgs on the ROS website. Is it a custom package? Where can I get it? Thanks.

Hello Michael,

Would you be willing to share the code for the publisher of the "safe_actions" topic?

Thanks in advance.

Nick Hetherington

Can ROS packages use libraries installed in a conda env, or do we need to install them from source?
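A quick way to see which Python interpreter and environment a script is actually running under is sketched below. This is only a diagnostic under stated assumptions, not an answer from the maintainers: `interpreter_report` is a hypothetical helper, the snippet targets Python 3, and `CONDA_DEFAULT_ENV` is the environment variable that `conda activate` sets (it is `None` when no conda env is active).

```python
import os
import sys


def interpreter_report():
    """Collect basic facts about the interpreter running this script."""
    return {
        "executable": sys.executable,  # path of the running Python binary
        "prefix": sys.prefix,          # root of the active installation or env
        "conda_env": os.environ.get("CONDA_DEFAULT_ENV"),  # None outside conda
    }


for key, value in interpreter_report().items():
    print(f"{key}: {value}")
```

Running this from inside a node's entry script (hypothetical placement) would show whether the node sees the conda interpreter or the system one.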

Hi!

This is amazing work! I want to use the social norm to optimize the reward function.

Could you tell me the value of the scalar penalty you used when training the network? I couldn't find the specific value in the paper.

Thank you!

Hi Michael,

I have been working with cadrl for a few weeks now, and I want to thank you for your amazing work.

Currently my turtlebot can navigate on a map while avoiding dynamic obstacles. (Basically following a global plan by sending subgoals, similar to the YouTube video: https://www.youtube.com/watch?v=CK1szio7PyA )

In the simulation, I publish the Cluster msgs directly from the odom data of the obstacles. However, I would like to generate them from sensor data, for example from the local costmap of the move_base package.

So I wanted to know if you can give me a hint on how you generated the cluster msgs in your example.

At minute 1:16 you can see the Jackal waiting for the lady to finish her order, meaning it is aware of the pillar in front of it. Are static obstacles from the map also published as cluster msgs?

thanks in advance :)

Hello Michael,

Regarding Clusters.msg: if I understand it right, the clusters msg contains information about the dynamic obstacles in the environment, such as their locations and velocities. If that is correct, how do you get this information? Do you use an object detection system like YOLO or something similar?

Thanks in advance.

Hello, author. I tried to run 'ga3c_cadrl_demo.py' and it prints 'action: [1.2 0.52359878]'. I understand this to be the next action of the host_agent. Is that correct?

Also, how can I get the figures (Fig. 4 and Fig. 6) in your paper if I run the code 'network.py'?
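For reading the printed values, here is a minimal sketch that assumes (the thread does not confirm this) that the two entries are a speed and a heading angle in radians. `describe_action` is a hypothetical helper, and the m/s unit in the printout is also an assumption.

```python
import math


def describe_action(action):
    """Split a [speed, heading_rad] pair and convert the heading to degrees.

    ASSUMPTION: the first entry is a speed and the second is an angle in radians.
    """
    speed = float(action[0])
    heading_deg = math.degrees(float(action[1]))
    return speed, heading_deg


speed, heading_deg = describe_action([1.2, 0.52359878])
print(f"speed = {speed:.2f} m/s, heading = {heading_deg:.1f} deg")
```

Under that assumption, 0.52359878 rad is pi/6, i.e. about 30 degrees.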

Hi!

Nice work! I want to train it in some new scenarios.

Is there any plan in near future to release the training code?

Thanks!

Hey Michael,

I'm trying to run the rosnode, but I get an error that the NNActions and PlannerMode messages do not exist. I also couldn't find them in the ford_msgs repo. Do I strictly need these messages, or can I remove them from the code?

Kind regards, Ewoud

Hey Michael,

Thank you very much for your patience. Now I have a new problem when running 'launch/cadrl_node.launch'. I look forward to your answer.

The specific information of the bug is as follows:

launch/cadrl_node.launch: line 1: syntax error near unexpected token newline'

launch/cadrl_node.launch: line 1: '

Thx. Kind regards.

Happy new year to you!

Bing Han

Hey Michael,

Firstly, following the README.md, I replaced 'git clone https://bitbucket.org/acl-swarm/ford_msgs.git' with 'git clone https://bitbucket.org/acl-swarm/ford_msgs.git -b dev' because I couldn't find the dev branch, and ran 'catkin_make'. It succeeded.

Then I tried to run './cadrl_node.py', but I got the error 'ImportError: No module named ford_msgs.msg' at line 7, 'from ford_msgs.msg import PedTrajVec, NNActions, PlannerMode, Clusters'.

How can I solve the above two problems?

Thx. Kind regards.
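An ImportError like the one above usually means the package is not on the interpreter's module search path. In a catkin workspace, a common cause is that the workspace's devel/setup.bash has not been sourced in the current shell, though that may not be the cause here. The sketch below is a Python 3 diagnostic with a hypothetical helper name, `is_importable`; it checks the search path without importing the package.

```python
import importlib.util
import sys


def is_importable(top_level_name):
    """Return True if a top-level package can be located on sys.path."""
    return importlib.util.find_spec(top_level_name) is not None


if not is_importable("ford_msgs"):
    # List every directory Python searched, to compare with the workspace path.
    print("ford_msgs was not found; directories searched:")
    for entry in sys.path:
        print("  ", entry)
```

If the workspace's Python package directory does not appear in the printed list, the environment setup is the first thing to check.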

Dear authors:

My research focuses on pedestrian obstacle avoidance algorithms. I have recently read your paper. I tried to run 'network.py' and 'demo.py', but I found that they only use already-trained models, and I don't know how to train the network.

If I want to change some parameters and train this network, how should I do that?

Thank you very much for your kind consideration and I am looking forward to your early reply.

Hello, can I retrain these NNs directly in a ROS simulator such as Gazebo? This repo doesn't seem to contain such a training process.

Hi Michael,

Thanks for your great work.

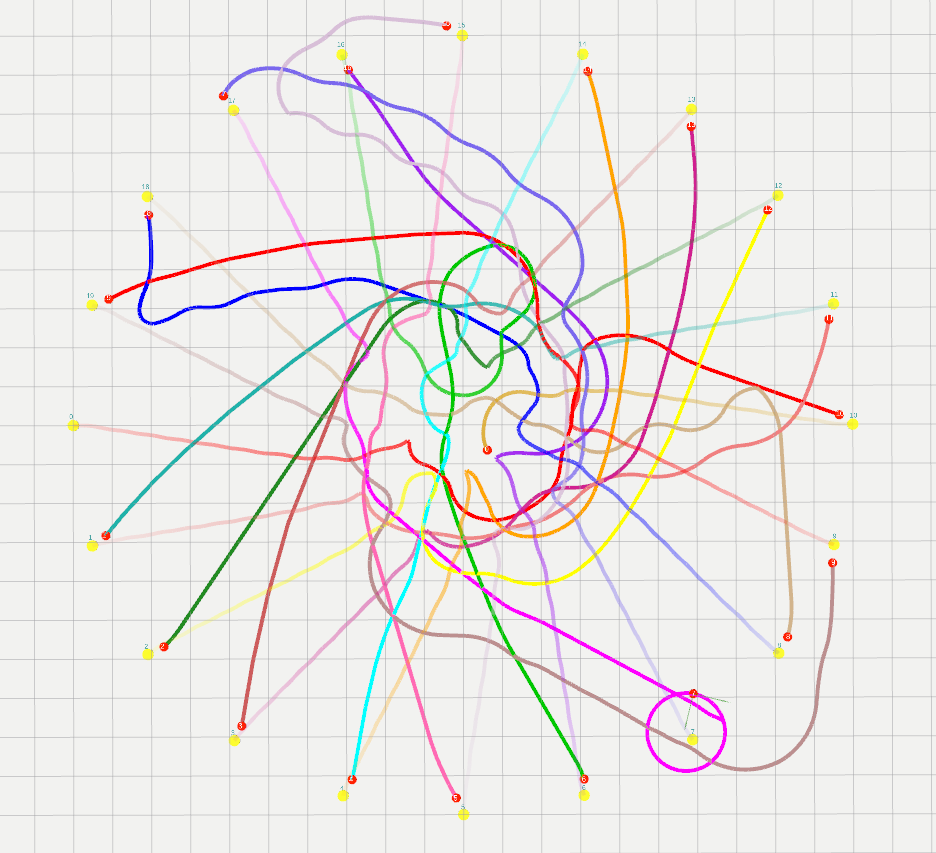

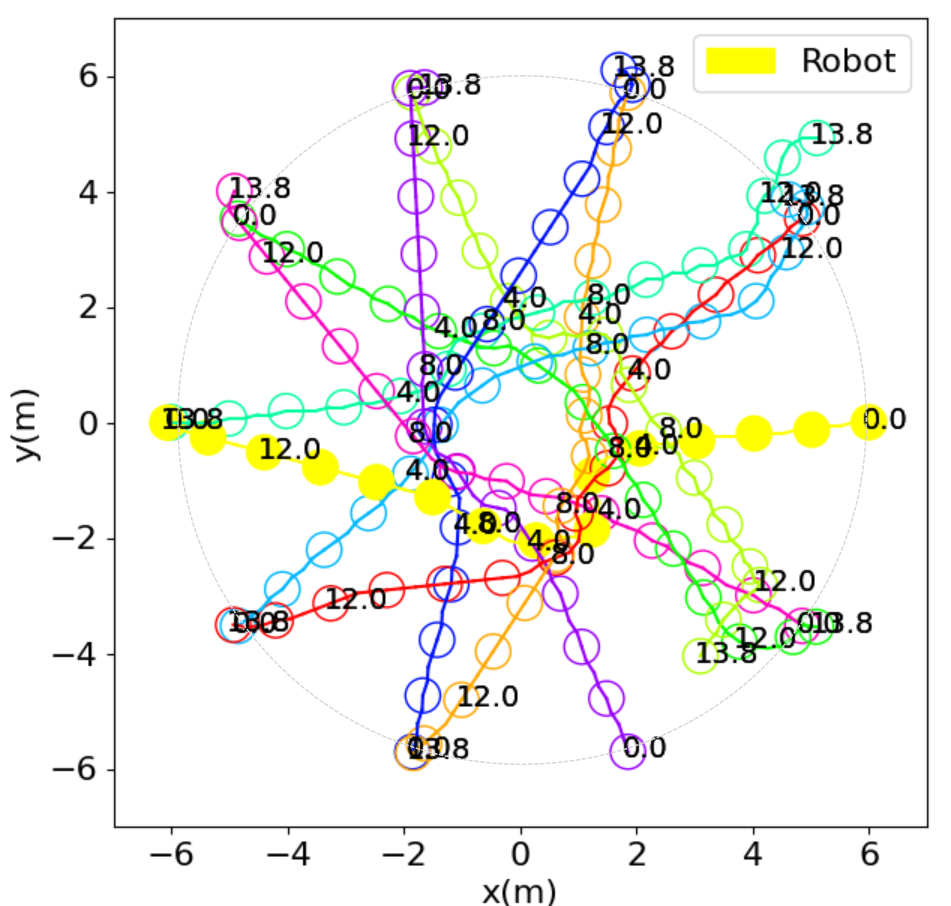

I am running this repo in the Stage simulator, but the circle trajectories do not look like the figure in README.md.

Here is the trajectory from my run in Stage.

Some parameters of my experiments are as follows:

The code details:

```python
"""
poses:
    all pose information of robots in the global coordinate system
    poses[i, 0]: the ith robot position at the x-axis
    poses[i, 1]: the ith robot position at the y-axis
    poses[i, 2]: the ith robot heading angle
goals:
    all goal position information in the global coordinate system
    goals[i, 0]: the goal position of the ith robot at the x-axis
    goals[i, 1]: the goal position of the ith robot at the y-axis
self.radius:
    the radius of all robots (0.36m)
self.max_vx:
    the maximum velocity of all robots (1m/s)
global_vels:
    the velocity information of all robots in the global coordinate system
    global_vels[i, 0]: the velocity of the ith robot at the x-axis
    global_vels[i, 1]: the velocity of the ith robot at the y-axis
"""
obs_inputs = []
for i in range(self.num_agents):
    # Host agent i: built from its own pose and goal, with id 0
    robot = Agent(poses[i, 0], poses[i, 1],
                  goals[i, 0], goals[i, 1],
                  self.radius, self.max_vx,
                  poses[i, 2], 0
                  )
    robot.vel_global_frame = np.array([global_vels[i, 0],
                                       global_vels[i, 1]])
    # Every other robot becomes an "other agent" with ids 1, 2, ...
    other_agents = []
    index = 1
    for j in range(len(poses)):
        if i == j:
            continue
        other_agents.append(
            Agent(poses[j, 0], poses[j, 1],
                  goals[j, 0], goals[j, 1],
                  self.radius, self.max_vx,
                  poses[j, 2], index
                  )
        )
        index += 1
    # Drop the first element of the observation before feeding the network
    obs_inputs.append(
        robot.observe(other_agents)[1:]
    )
actions = []
predictions = self.nn.predict_p(obs_inputs, None)
for i, p in enumerate(predictions):
    # Pick the most probable discrete action for each robot
    raw_action = self.possible_actions.actions[np.argmax(p)]
    actions.append(np.array([raw_action[0], raw_action[1]]))
```

Did I misunderstand the code or set a parameter incorrectly?

Looking forward to your reply : )
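One thing that may be worth checking in the loop above is how the selected raw action is turned into a velocity command. The sketch below assumes (this thread does not confirm it) that each raw action is a pair of (fraction of the preferred speed, heading change relative to the current heading). `wrap_angle` and `to_command` are hypothetical helpers, not functions from the repo.

```python
import math


def wrap_angle(angle):
    """Wrap an angle in radians into the interval [-pi, pi)."""
    return (angle + math.pi) % (2.0 * math.pi) - math.pi


def to_command(raw_action, pref_speed, heading):
    """Convert a raw action into (speed, target heading in the global frame).

    ASSUMPTION: raw_action[0] is a fraction of the preferred speed and
    raw_action[1] is a heading change relative to the current heading.
    """
    speed = pref_speed * raw_action[0]
    target_heading = wrap_angle(heading + raw_action[1])
    return speed, target_heading


# Example: full speed, turn by +30 degrees while currently facing 180 degrees.
speed, target_heading = to_command((1.0, math.pi / 6), pref_speed=1.0, heading=math.pi)
print(speed, target_heading)
```

If the raw action is instead already an absolute command, this conversion does not apply. The sketch only illustrates the relative-to-absolute step under the stated assumption.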

Hi,

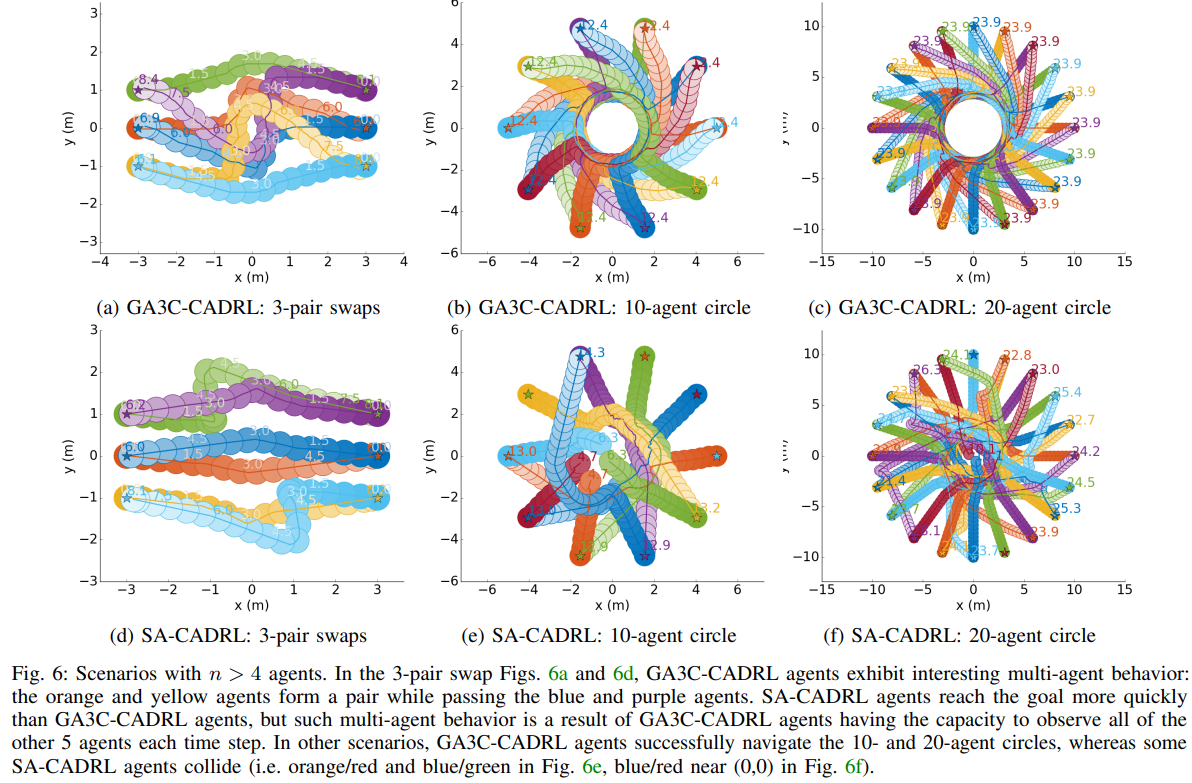

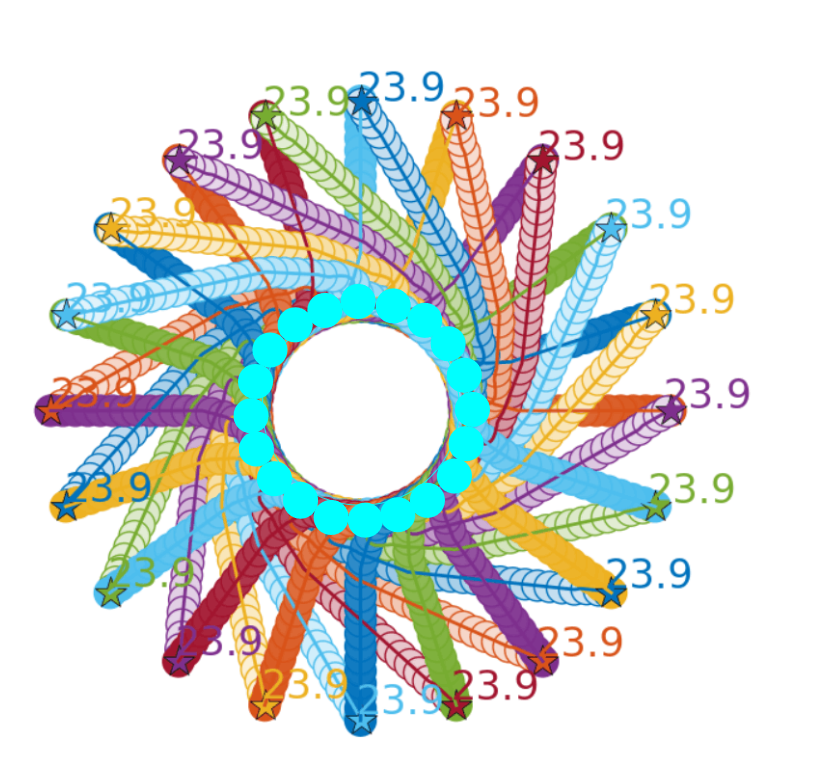

I find the results in your paper (GA3C-CADRL) pretty good, and it surprises me that the results in Fig. 6 (b)(c) are so perfect.

It seems that these agents have to move very carefully since they are very close to each other.

I tried to replicate this result using the checkpoint file you provided, but only got results like this ....

I wonder if it's because I need to retrain for this scene, or because the car size or some other parameter isn't set up properly.

I really need your help. Thanks ~

Hi, I am very interested in this project and I really want to replicate the figures shown in the paper.

However, after I installed the dependencies and ran the Jupyter example code, I found the example a little too simple: it just shows how to load the net and get an action prediction at a single moment.

I wonder if there is some documentation or code that shows how to use the network, agent and util Python files to draw an obstacle avoidance figure like the ones shown in the paper. I am also curious about how to use the data in the dataset you provided, along with the existing network module in the code, to produce some results.

I hope I have described my problem clearly. Thank you.
