# Introduction

This is the project repo for the final project of the Udacity Self-Driving Car Nanodegree: Programming a Real Self-Driving Car. For more information about the project, see the project introduction here.

| Name | Udacity Email | Location | Profile |
|---|---|---|---|
| Ahsan Habib (Team Lead) | [email protected] | Santa Clara, CA | https://www.linkedin.com/in/habibahsan |
| Krishna Koravadi | [email protected] | Rochester Hills, MI | https://www.linkedin.com/in/koravadi |
| Mahmoud A Selim | [email protected] | Cairo, Egypt | https://www.linkedin.com/in/mahmoud-selim |
| Sarang Kulkarni | [email protected] | San Jose, CA | https://www.linkedin.com/in/sarangkulkarni/ |
| Vincent Barrault | [email protected] | Frankfurt, Germany | https://www.linkedin.com/in/vincent-barrault |

Here is the simulation run on the highway, which uses waypoint path planning and a PID controller for controlling speed, steering, and throttle. In this simulation, the traffic light waypoints are used to detect the status of each light, and the car is controlled to stop at red lights and go on green.
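The PID control mentioned above can be illustrated with a generic discrete PID controller. This is a minimal sketch, not the project's controller code: the class name `PID`, its parameters, the gains, and the throttle limits are assumptions chosen for illustration.

```python
class PID:
    """Minimal discrete PID controller (illustrative; gains are hypothetical)."""

    def __init__(self, kp, ki, kd, out_min=float("-inf"), out_max=float("inf")):
        self.kp, self.ki, self.kd = kp, ki, kd
        self.out_min, self.out_max = out_min, out_max
        self.integral = 0.0
        self.last_error = 0.0

    def reset(self):
        # Clear accumulated state, e.g. when drive-by-wire is disengaged.
        self.integral = 0.0
        self.last_error = 0.0

    def step(self, error, dt):
        # Accumulate the integral term and approximate the derivative.
        self.integral += error * dt
        derivative = (error - self.last_error) / dt if dt > 0 else 0.0
        self.last_error = error
        output = self.kp * error + self.ki * self.integral + self.kd * derivative
        # Clamp to the actuator range (e.g. throttle in [0, 1]).
        return max(self.out_min, min(self.out_max, output))


# Hypothetical gains: drive the velocity error (m/s) toward zero, throttle in [0, 1].
throttle_pid = PID(kp=0.3, ki=0.1, kd=0.0, out_min=0.0, out_max=1.0)
target_speed, current_speed = 11.1, 10.0  # m/s
throttle = throttle_pid.step(target_speed - current_speed, dt=0.02)
print(round(throttle, 4))  # 0.3322
```

Clamping keeps the command inside the actuator's valid range; a real controller would usually also guard against integral windup.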

Click on the video to see a complete run. Note: our code is able to drive the vehicle around the complete highway loop with the camera turned OFF in the simulator. When the camera is turned ON, even with no classification running and no subscription to the camera data, the vehicle goes out of control at around waypoint index 4000. We think this may be due to limited computation power and node execution priority issues.

Here is the simulation run at the test site, which uses waypoint path planning and a PID controller for controlling speed, steering, and throttle.

Click on the video to see a complete run. Note: To make the simulation work for the test site, we need to add these two lines to the site.launch file, so that all the nodes get the vehicle position and velocity from the simulator.

```xml
<!-- Simulator Bridge -->
<include file="$(find styx)/launch/server.launch" />
```

To classify the traffic light state (green, red, yellow, or unknown), we started from existing pre-trained models from the TensorFlow object detection library. Several models are available; we looked at the following ones:

- `faster_rcnn_inception_v2_coco`
- `ssd_inception_v2_coco`
- `ssd_mobilenet_v1_coco`

`faster_rcnn_inception_v2_coco` is very accurate but slow to process images. `ssd_mobilenet_v1_coco` is much faster, but its accuracy is fairly low. `ssd_inception_v2_coco` offers a good compromise between processing time and accuracy, so this model was chosen for the project. However, the pre-trained model does not distinguish traffic light colors, so it had to be retrained to detect the individual light states.

A dataset of ~1200 images was gathered (mainly from the ROS bag provided by Udacity) and labeled using labelimg.py. 80% of the images were used for training and 20% for testing.

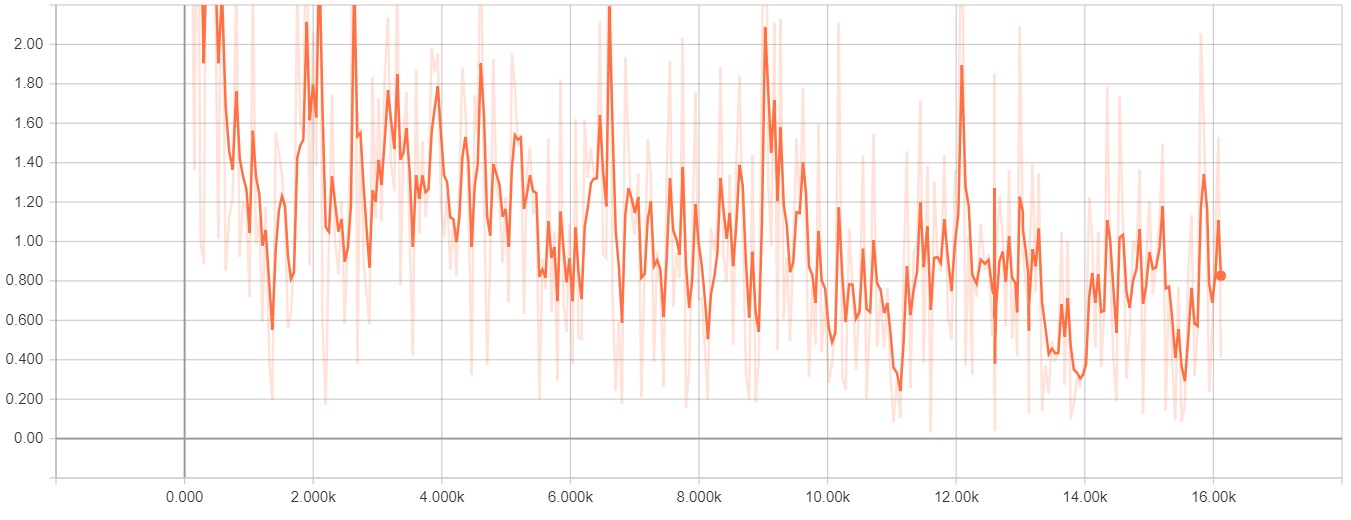
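The 80/20 split described above can be sketched as a seeded shuffle followed by a slice. This is a minimal illustration, assuming a flat list of labeled image paths; the function name `split_dataset`, the seed, and the file names are hypothetical and not taken from the project.

```python
import random


def split_dataset(image_paths, train_fraction=0.8, seed=42):
    """Shuffle labeled images and split them into train/test lists."""
    paths = list(image_paths)
    random.Random(seed).shuffle(paths)  # seeded shuffle so the split is reproducible
    n_train = int(round(len(paths) * train_fraction))
    return paths[:n_train], paths[n_train:]


# Hypothetical file names standing in for the ~1200 labeled images.
images = ["img_%04d.jpg" % i for i in range(1200)]
train, test = split_dataset(images)
print(len(train), len(test))  # 960 240
```

Shuffling before splitting matters here because consecutive frames from a ROS bag are nearly identical; a plain head/tail split would put whole light sequences in only one of the two sets.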

Using tensorflow-gpu, the model was trained locally on a computer equipped with an NVIDIA GPU (GTX 1050) for around 16,000 steps, until the loss was almost always under 2.

The classifier code is located in tl_classifier.py and returns its result to tl_detector.py.

When the classifier finds the class corresponding to a color, it also checks the probability (or score) that this prediction is correct. The minimum accepted score is 0.45; if the score is below this, the classifier reports that no traffic light state was found.
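The score check can be sketched as follows, assuming per-image outputs shaped like the `detection_scores` and `detection_classes` tensors of a TensorFlow Object Detection model. The label map `CLASS_TO_STATE` and the function name `classify` are hypothetical; the state constants are intended to mirror `styx_msgs/TrafficLight`, but that is an assumption to verify against the message definition.

```python
# Traffic light states; values assumed to mirror styx_msgs/TrafficLight.
RED, YELLOW, GREEN, UNKNOWN = 0, 1, 2, 4

# Hypothetical label map used when retraining the detector.
CLASS_TO_STATE = {1: GREEN, 2: RED, 3: YELLOW}

MIN_SCORE = 0.45  # minimum confidence, as described above


def classify(scores, classes, min_score=MIN_SCORE):
    """Return the light state of the most confident detection, or UNKNOWN.

    `scores` and `classes` are parallel sequences for a single image,
    e.g. the detection_scores / detection_classes model outputs.
    """
    if len(scores) == 0:
        return UNKNOWN
    # Pick the highest-scoring detection instead of relying on sort order.
    best = max(range(len(scores)), key=lambda i: scores[i])
    if scores[best] < min_score:
        return UNKNOWN  # not confident enough: report no state
    # Class ids may arrive as floats, so convert before the lookup.
    return CLASS_TO_STATE.get(int(classes[best]), UNKNOWN)


print(classify([0.91, 0.20], [2, 1]))  # 0 (RED)
print(classify([0.30], [1]))           # 4 (UNKNOWN: below threshold)
```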

After the color is returned, the detector does not update the traffic light state immediately. It requires at least 4 consecutive images to return the same prediction before updating the light state. The classifier occasionally misclassifies 1 or 2 images, and this filter prevents the light state from changing because of such errors.
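The consecutive-prediction rule can be sketched as a small filter. This is an illustrative sketch, not the code in `tl_detector.py`: the class `LightStateFilter`, its attributes, and the use of string states are assumptions; only the threshold of 4 comes from the text above.

```python
STATE_COUNT_THRESHOLD = 4  # consecutive identical predictions required


class LightStateFilter:
    """Accept a new light state only after it is predicted N times in a row."""

    def __init__(self, threshold=STATE_COUNT_THRESHOLD, initial_state=None):
        self.threshold = threshold
        self.state = initial_state      # last confirmed state
        self.candidate = initial_state  # most recent raw prediction
        self.count = 0                  # consecutive occurrences of candidate

    def update(self, prediction):
        if prediction == self.candidate:
            self.count += 1
        else:
            # A different prediction restarts the count.
            self.candidate = prediction
            self.count = 1
        if self.count >= self.threshold:
            self.state = self.candidate
        return self.state


light_filter = LightStateFilter(initial_state="GREEN")
predictions = ["RED", "RED", "GREEN", "RED", "RED", "RED", "RED"]
states = [light_filter.update(p) for p in predictions]
print(states)  # only the final entry is 'RED'
```

The two early "RED" predictions are interrupted by a "GREEN", so the confirmed state only switches once four "RED" predictions arrive back to back.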

After running the classifier on roughly 2400 images collected from the traffic light ROS bag, we reviewed the outcome. Here is the summary:

| Color or Classification | Number of images |
|---|---|
| Green | 1063 |
| Red | 409 |
| Yellow | 288 |
| Other (not a light) | 493 |
| Incorrect | 126 |

This gives a total accuracy of 94.7%.
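The accuracy figure follows from the table by counting every row except "Incorrect" as a correct outcome. A short check of the arithmetic, using only the counts listed in the table:

```python
# Counts from the classification summary table.
counts = {
    "Green": 1063,
    "Red": 409,
    "Yellow": 288,
    "Other (not a light)": 493,
    "Incorrect": 126,
}

total = sum(counts.values())           # all reviewed images
correct = total - counts["Incorrect"]  # every row except "Incorrect"
accuracy = correct / float(total)
print("{}/{} = {:.1%}".format(correct, total, accuracy))  # 2253/2379 = 94.7%
```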

A few examples of correct classification:

Traffic light images were correctly classified with very high confidence, even when some of them were far away and not very clear.

Some traffic light images are classified with very low confidence. Possible reasons are the distance of the camera from the light, which makes the light unclear in the images, or bright sunlight, which makes it hard to distinguish between the signal colors.

- Be sure that your workstation is running Ubuntu 16.04 Xenial Xerus or Ubuntu 14.04 Trusty Tahr. Ubuntu downloads can be found here.
- If using a virtual machine to install Ubuntu, use the following configuration as a minimum:
  - 2 CPUs
  - 2 GB system memory
  - 25 GB of free hard drive space

  The Udacity-provided virtual machine has ROS and Dataspeed DBW already installed, so you can skip the next two steps if you are using it.
- Follow these instructions to install ROS:
  - ROS Kinetic if you have Ubuntu 16.04.
  - ROS Indigo if you have Ubuntu 14.04.
- Install Dataspeed DBW:
  - Use this option to install the SDK on a workstation that already has ROS installed: One Line SDK Install (binary)
- Download the Udacity Simulator.

Build the docker container:

```bash
docker build . -t capstone
```

Run the docker file:

```bash
docker run -p 4567:4567 -v $PWD:/capstone -v /tmp/log:/root/.ros/ --rm -it capstone
```

- Clone the project repository

  ```bash
  git clone https://github.com/udacity/CarND-Capstone.git
  ```

- Install python dependencies

  ```bash
  cd CarND-Capstone
  pip install -r requirements.txt
  ```

- Make and run styx

  ```bash
  cd ros
  catkin_make
  source devel/setup.sh
  roslaunch launch/styx.launch
  ```

- Run the simulator

To test on real-world images recorded on the Udacity self-driving car:

- Download the training bag that was recorded on the Udacity self-driving car.
- Unzip the file

  ```bash
  unzip traffic_light_bag_file.zip
  ```

- Play the bag file

  ```bash
  rosbag play -l traffic_light_bag_file/traffic_light_training.bag
  ```

- Launch your project in site mode

  ```bash
  cd CarND-Capstone/ros
  roslaunch launch/site.launch
  ```

- Confirm that traffic light detection works on real-life images