Hi,

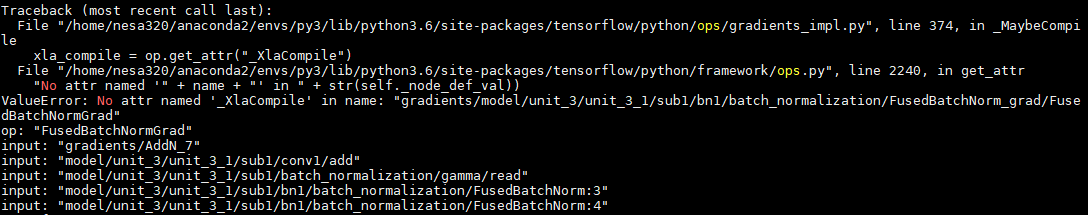

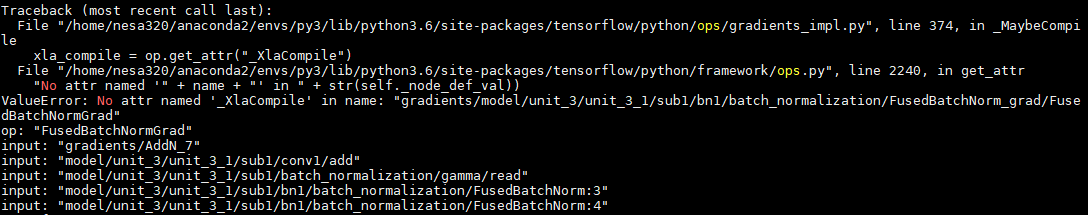

I'm implementing your input gradient regularization on my ResNet20V1 model for the CIFAR-10 dataset, and I ran into an error.

Here is my main code:

    x_nat = tf.placeholder(tf.float32, (None, 32, 32, 3))
    y = tf.placeholder(tf.float32, (None, 10))
    lamda = 100

    logits_nat = model._build_model(x_nat)
    preds_nat = tf.nn.softmax(logits_nat)

    loss_1 = tf.reduce_mean(tf.nn.softmax_cross_entropy_with_logits(labels=y, logits=logits_nat))
    loss_2 = tf.nn.l2_loss(tf.gradients(tf.reduce_mean(tf.nn.softmax_cross_entropy_with_logits(labels=y, logits=logits_nat)), x_nat)[0])
    total_loss = loss_1 + loss_2 * lamda

    optimizer = tf.train.AdamOptimizer(learning_rate=0.0002, epsilon=1e-4)
    gradients, variables = zip(*optimizer.compute_gradients(total_loss))
    train_step = optimizer.apply_gradients(zip(gradients, variables))
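
To make the objective explicit, here is a minimal NumPy sketch of the same loss on a toy linear softmax model, not on my ResNet. The names `softmax`, `mean_cross_entropy`, `input_gradient` and `total_loss` are made up for this illustration, and the analytic input gradient assumes one-hot labels. It only shows what `loss_1 + loss_2 * lamda` is meant to compute; it does not reproduce the error.

```python
import numpy as np

def softmax(z):
    # Subtract the row-wise max for numerical stability.
    z = z - z.max(axis=1, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=1, keepdims=True)

def mean_cross_entropy(x, y, W, b):
    # Counterpart of loss_1: mean softmax cross-entropy over the batch.
    p = softmax(x @ W + b)
    return -np.mean(np.sum(y * np.log(p), axis=1))

def input_gradient(x, y, W, b):
    # Analytic d(mean CE)/dx for logits = x @ W + b (assumes one-hot y).
    p = softmax(x @ W + b)
    return (p - y) @ W.T / x.shape[0]

def total_loss(x, y, W, b, lam):
    g = input_gradient(x, y, W, b)
    penalty = 0.5 * np.sum(g ** 2)  # same definition as tf.nn.l2_loss
    return mean_cross_entropy(x, y, W, b) + lam * penalty
```

Training on `total_loss` needs the gradient of `penalty` with respect to the weights, which is a second-order derivative of the cross-entropy. That is the part every op in the real graph has to support.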

And here is my model architecture:

    def _build_model(self, x_input):
        with tf.variable_scope('model', reuse=tf.AUTO_REUSE):
            with tf.variable_scope('input'):
                ch = x_input.get_shape().as_list()[3]
                x = self._conv('init_conv', x_input, 3, ch, 16, self._stride_arr(1))
                x = self._batch_norm('init_bn', x)
                x = self._relu(x)

            res_func = self._residual
            filters = [16, 32, 64]

            with tf.variable_scope('unit_1'):
                with tf.variable_scope('unit_1_1'):
                    x = res_func(x, filters[0], filters[0], self._stride_arr(1))
                with tf.variable_scope('unit_1_2'):
                    x = res_func(x, filters[0], filters[0], self._stride_arr(1))
                with tf.variable_scope('unit_1_3'):
                    x = res_func(x, filters[0], filters[0], self._stride_arr(1))

            with tf.variable_scope('unit_2'):
                with tf.variable_scope('unit_2_1'):
                    x = res_func(x, filters[0], filters[1], self._stride_arr(2))
                with tf.variable_scope('unit_2_2'):
                    x = res_func(x, filters[1], filters[1], self._stride_arr(1))
                with tf.variable_scope('unit_2_3'):
                    x = res_func(x, filters[1], filters[1], self._stride_arr(1))

            with tf.variable_scope('unit_3'):
                with tf.variable_scope('unit_3_1'):
                    x = res_func(x, filters[1], filters[2], self._stride_arr(2))
                with tf.variable_scope('unit_3_2'):
                    x = res_func(x, filters[2], filters[2], self._stride_arr(1))
                with tf.variable_scope('unit_3_3'):
                    x = res_func(x, filters[2], filters[2], self._stride_arr(1))

            with tf.variable_scope('unit_last'):
                x = self._avg_pool(x, 8)

            with tf.variable_scope('logit'):
                x = self._fully_connected(x, 10)

            return x

    def _batch_norm(self, name, x):
        """Batch normalization."""
        with tf.name_scope(name):
            return tf.layers.batch_normalization(
                inputs=x,
                training=(self.mode == 'train'))

    def _residual(self, x, in_filter, out_filter, stride):
        """Residual unit with 2 sub layers."""
        orig_x = x

        with tf.variable_scope('sub1'):
            x = self._conv('conv1', x, 3, in_filter, out_filter, stride)
            x = self._batch_norm('bn1', x)
            x = self._relu(x)

        with tf.variable_scope('sub2'):
            x = self._conv('conv2', x, 3, out_filter, out_filter, self._stride_arr(1))
            #x = self._batch_norm('bn2', x)

        with tf.variable_scope('sub_add'):
            if in_filter != out_filter:
                y = self._conv('conv_match', orig_x, 1, in_filter, out_filter, stride)
            else:
                y = orig_x
            z = x + y
            z = self._relu(z)

        return z

    def _conv(self, name, x, filter_size, in_filters, out_filters, strides):
        """Convolution."""
        with tf.variable_scope(name):
            n = filter_size * filter_size * out_filters
            kernel = tf.get_variable(
                'DW', [filter_size, filter_size, in_filters, out_filters],
                tf.float32,
                initializer=tf.keras.initializers.he_normal(),
                regularizer=tf.keras.regularizers.l2(l=1e-4))
            bias = tf.get_variable('biases', [out_filters], initializer=tf.constant_initializer())
            conv = tf.nn.conv2d(x, kernel, strides, padding='SAME')
            result = conv + bias
            return result

    def _relu(self, x, leakiness=0.0):
        """Relu, with optional leaky support."""
        return tf.where(tf.less(x, 0.0), leakiness * x, x, name='leaky_relu')

    def _fully_connected(self, x, out_dim):
        """FullyConnected layer for final output."""
        num_non_batch_dimensions = len(x.shape)
        prod_non_batch_dimensions = 1
        for ii in range(num_non_batch_dimensions - 1):
            prod_non_batch_dimensions *= int(x.shape[ii + 1])
        x = tf.reshape(x, [tf.shape(x)[0], -1])
        w = tf.get_variable(
            'DW', [prod_non_batch_dimensions, out_dim],
            initializer=tf.keras.initializers.he_normal())
        b = tf.get_variable('biases', [out_dim],
                            initializer=tf.constant_initializer())
        result = tf.nn.xw_plus_b(x, w, b)
        return result

    def _avg_pool(self, x, pool_size):
        return tf.nn.avg_pool(x, ksize=[1, pool_size, pool_size, 1], strides=[1, pool_size, pool_size, 1], padding='VALID')

The error occurs when the `train_step` line is executed. If I remove the BN layers from the residual block, everything works. The BN layers in the main body of the model do not cause any problem. Does your double backpropagation support BN layers inside a residual block? Looking forward to your reply!