lizhenwangt / normalgan

NormalGAN: Learning Detailed 3D Human from a Single RGB-D Image (ECCV 2020)

Hello!

I've tested your project and the sample data runs perfectly. However, I encountered some issues when testing with my own data.

I've made the following modifications:

- low_thres and up_thres to match my depth range
- fx, fy, cx, and cy according to my depth camera's intrinsic parameters

Despite these changes, I'm still only getting a few dozen valid pixels (np.sum(mask_pc)), which is insufficient to produce results.

I'm wondering if there's an error in my approach to modifying these parameters? Or are there additional changes I need to make?

Your assistance would be greatly appreciated! Thank you for your time.
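For anyone debugging the same symptom, a minimal diagnostic sketch follows. It is not the repository's code: the function name `count_valid_pixels`, the synthetic depth map, and the intrinsic values are all hypothetical, and it only assumes a standard pinhole model with thresholds named as in the post above. It shows how a mismatch between the depth unit and the threshold unit (millimetres versus metres) can leave the mask almost empty even when the intrinsics are correct.

```python
import numpy as np

def count_valid_pixels(depth, fx, fy, cx, cy, low_thres, up_thres):
    """Back-project a depth map and count pixels inside (low_thres, up_thres).

    Hypothetical helper: depth is an (H, W) array in the SAME unit as the
    thresholds. Returns the valid-pixel count and the (H, W, 3) point map.
    """
    depth = depth.astype(np.float32)
    h, w = depth.shape
    # Pixel grid: u indexes columns, v indexes rows.
    u, v = np.meshgrid(np.arange(w), np.arange(h))
    # Standard pinhole back-projection to camera coordinates.
    x = (u - cx) * depth / fx
    y = (v - cy) * depth / fy
    points = np.stack([x, y, depth], axis=-1)
    mask = (depth > low_thres) & (depth < up_thres)
    return int(mask.sum()), points

# Synthetic depth map stored in millimetres (made-up values for illustration).
depth_mm = np.zeros((424, 512), dtype=np.uint16)
depth_mm[100:300, 200:320] = 1500  # a 200 x 120 region at 1.5 m

# Placeholder intrinsics, not taken from any real camera.
fx, fy, cx, cy = 365.0, 365.0, 256.0, 212.0

# Thresholds in millimetres match the depth unit: the region is kept.
n_matching, points = count_valid_pixels(depth_mm, fx, fy, cx, cy, 1000, 2000)
# Thresholds in metres against millimetre depth: nothing survives.
n_mismatch, _ = count_valid_pixels(depth_mm, fx, fy, cx, cy, 1.0, 2.0)

print(n_matching, n_mismatch)
```

If the count is near zero, printing the minimum and maximum of the non-zero depth values is a quick way to see which unit the file actually uses before adjusting low_thres and up_thres.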

Hi, Wang

Thank you very much for your excellent work.

I want to run NormalGAN on data from an Azure Kinect. Does it work with Azure Kinect?

Thank you!

Hello, amazing work, I really love it. Since I don't have a Kinect, can I use the output of single-view depth estimation methods instead of KinectV2 depth?

Hi, Wang

I really appreciate your great work!

I want to run NormalGAN on my own KinectV2 data, but ran into some problems:

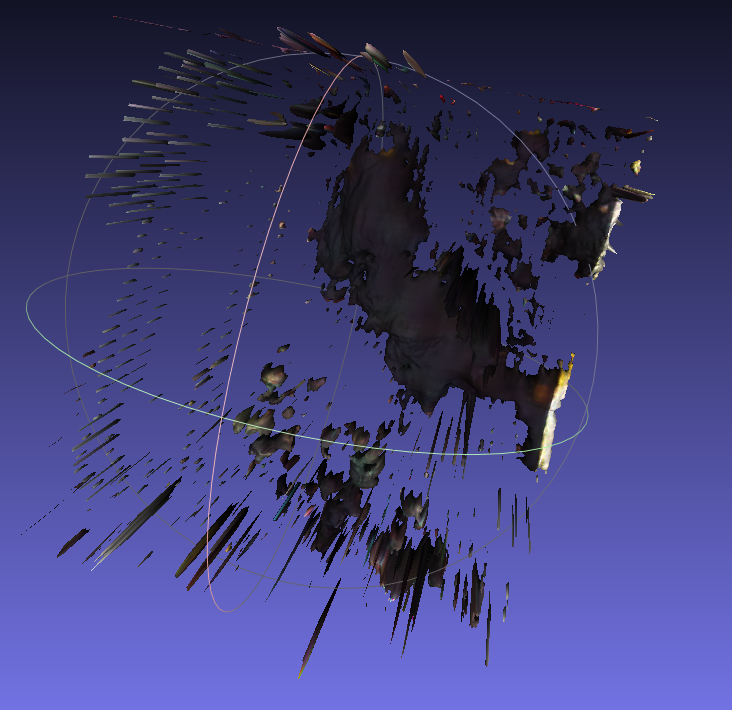

The output is terrible, and it does not even look like a human at all...

I tried feeding many frames into NormalGAN and also did some simple denoising, but the outputs stayed nearly the same...(T_T)

My color map: size: 512 * 424, type: CV_8UC3 (24bit);

My depth map: size: 512 * 424, type: CV_16UC1 (16bit);
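The formats listed above can be checked programmatically before running the network. The sketch below is a hypothetical helper (`check_rgbd_pair` is not part of the repository) that only verifies the properties stated in this post: an 8-bit, 3-channel color map and a 16-bit, single-channel depth map of the same resolution. It cannot tell whether the two images are actually registered to each other or whether the depth unit is the one the code expects.

```python
import numpy as np

def check_rgbd_pair(color, depth):
    """Return a list of format problems for a color/depth pair (hypothetical helper)."""
    problems = []
    # CV_8UC3 corresponds to a uint8 array of shape (H, W, 3).
    if color.dtype != np.uint8 or color.ndim != 3 or color.shape[2] != 3:
        problems.append("color should be 8-bit with 3 channels")
    # CV_16UC1 corresponds to a uint16 array of shape (H, W).
    if depth.dtype != np.uint16 or depth.ndim != 2:
        problems.append("depth should be 16-bit with 1 channel")
    if color.shape[:2] != depth.shape[:2]:
        problems.append("color and depth resolutions differ")
    if not np.any(depth > 0):
        problems.append("depth map contains no non-zero pixels")
    return problems

# A 512 x 424 image (width x height) is a (424, 512) array in NumPy.
color = np.zeros((424, 512, 3), dtype=np.uint8)
depth = np.full((424, 512), 1500, dtype=np.uint16)

ok_report = check_rgbd_pair(color, depth)
bad_report = check_rgbd_pair(color, depth.astype(np.float32))
print(ok_report, bad_report)
```

A depth image that was silently converted to 8 bits or to float when saved or loaded would be caught by the second check.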

Here are my maps and output:

Your NormalGAN is magical, and I really want to get it working well on my data.

So I hope you can give me some advice. Thanks a lot!

Best wishes

Great work!

I am trying to get a mesh from pictures taken with the iPad LiDAR. I got the intrinsics from https://developer.apple.com/forums/thread/663995, but I could not get the correct mesh: the images in result/cz0, cz1, dz0, dz1 are all incorrect. When I test with your data, the result is correct.
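One possible cause, not confirmed in this thread, is that the intrinsics were reported for a different image resolution than the depth map being fed in. Pinhole intrinsics scale linearly with image size, so they need rescaling when the depth map is smaller or larger than the image they were calibrated for. The sketch below assumes that situation; `scale_intrinsics` is a hypothetical helper and all numbers are illustrative, not values from any real device. It also ignores half-pixel offset conventions, which differ between libraries.

```python
def scale_intrinsics(fx, fy, cx, cy, src_size, dst_size):
    """Rescale pinhole intrinsics from src_size to dst_size.

    Both sizes are (width, height) tuples. Hypothetical helper for illustration.
    """
    src_w, src_h = src_size
    dst_w, dst_h = dst_size
    sx = dst_w / src_w  # horizontal scale factor
    sy = dst_h / src_h  # vertical scale factor
    # Focal lengths and principal point scale with their own axis.
    return fx * sx, fy * sy, cx * sx, cy * sy

# Illustrative numbers only: intrinsics given for a 1920 x 1440 color image,
# applied to a 256 x 192 depth map.
depth_fx, depth_fy, depth_cx, depth_cy = scale_intrinsics(
    1500.0, 1500.0, 960.0, 720.0,
    src_size=(1920, 1440),
    dst_size=(256, 192),
)
print(depth_fx, depth_fy, depth_cx, depth_cy)
```

If the rescaled principal point does not land near the center of the depth image, the intrinsics probably belong to a different resolution or a cropped image.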

Hi! Thank you very much for your excellent work. I got great reconstructions. However, I have a question: how do I get the depth map corresponding to the RGB picture?

Hi,

Interesting work. The supplementary section mentions that the front-view depth rectification network outputs a 1D rectified depth image and a 1D binary mask of the orthographic view. How does the mask help in learning the depth image? Would I get the same results if I trained the network without predicting the orthographic mask?

First of all, this is very interesting research. Thank you so much for sharing the repository! I am trying to play around with the approach on my own data. Is it possible for you to share your training code?

How do I download the pretrained models and run the test?
