An Open-Source Framework for Prompt-learning.

What's New?

- Dec 2021: pip install openprompt

- Dec 2021: SuperGLUE performance results are added.

- Dec 2021: We support the generation paradigm for all tasks by adding a new verbalizer, GenerationVerbalizer, and a tutorial, 4.1_all_tasks_are_generation.py.

- Nov 2021: We have released a paper, "OpenPrompt: An Open-source Framework for Prompt-learning".

- Nov 2021: PrefixTuning now supports T5.

- Nov 2021: We made some major changes since the last version, including a newly introduced flexible template language. Part of the docs is outdated and we will fix it soon.

Overview

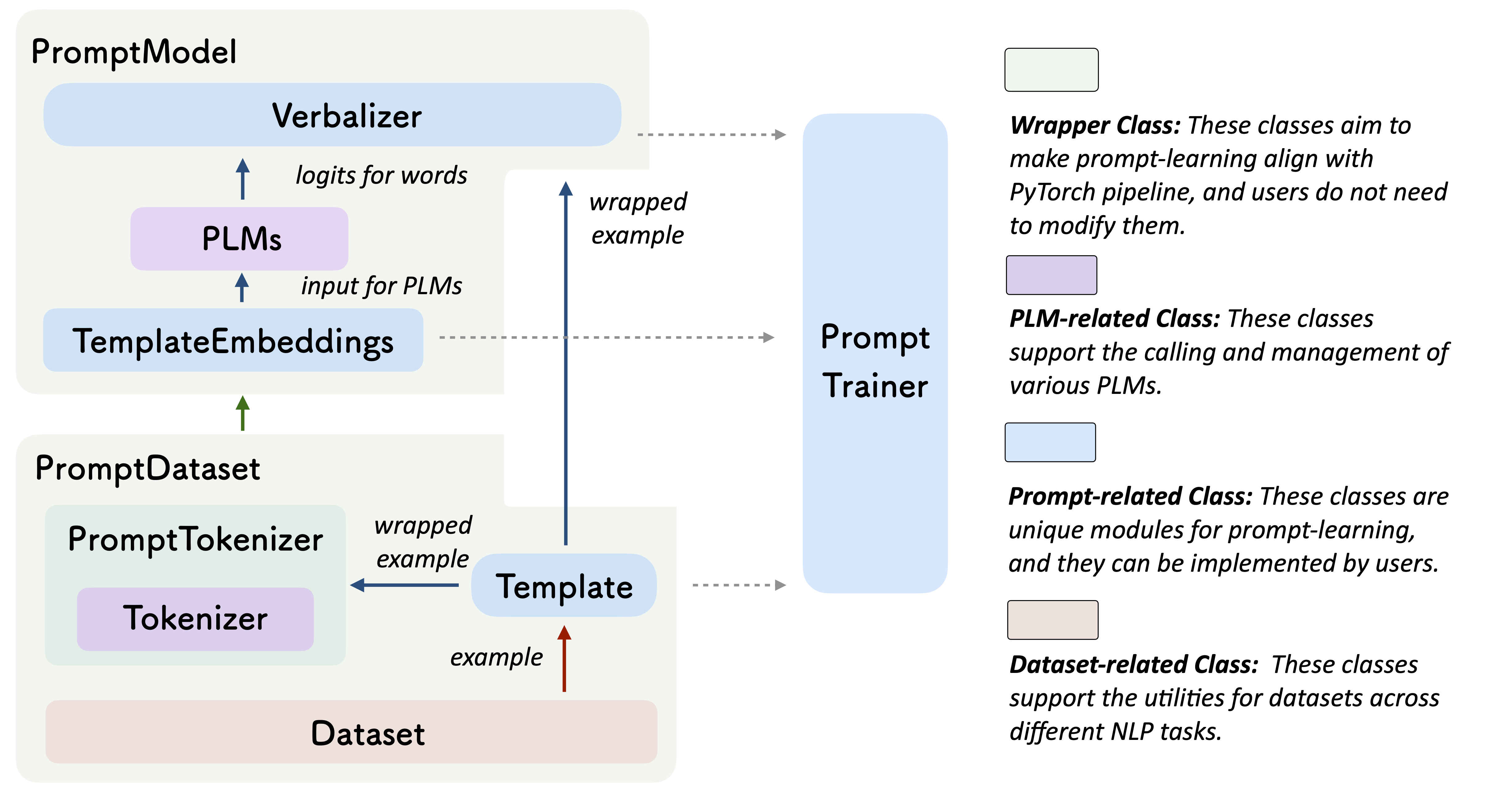

Prompt-learning is the latest paradigm for adapting pre-trained language models (PLMs) to downstream NLP tasks: it modifies the input text with a textual template and directly uses PLMs to conduct pre-trained tasks. This library provides a standard, flexible and extensible framework to deploy the prompt-learning pipeline. OpenPrompt supports loading PLMs directly from Hugging Face Transformers. In the future, we will also support PLMs implemented by other libraries. For more resources about prompt-learning, please check our paper list.

What Can You Do via OpenPrompt?

- Use the implementations of current prompt-learning approaches. We have implemented various prompting methods, including templating, verbalizing and optimization strategies, under a unified standard. You can easily call and understand these methods.

- Design your own prompt-learning work. With the extensibility of OpenPrompt, you can quickly practice your prompt-learning ideas.

Installation

Using Pip

Our repo is tested on Python 3.6+ and PyTorch 1.8.1+. Install OpenPrompt using pip as follows:

    pip install openprompt

To play with the latest features, you can also install OpenPrompt from source.

Using Git

Clone the repository from GitHub and install it:

    git clone https://github.com/thunlp/OpenPrompt.git
    cd OpenPrompt
    pip install -r requirements.txt
    python setup.py install

To modify the code, install in development mode:

    python setup.py develop
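Before installing, it may help to confirm that the environment meets the versions mentioned above (Python 3.6+ and PyTorch 1.8.1+). The following is a minimal sketch and not part of OpenPrompt: the helper names `parse_version` and `meets_minimum` are hypothetical, and the parsing assumes dotted numeric version strings such as `1.8.1` or `1.8.1+cu111`.

```python
import re
import sys


def parse_version(version):
    """Turn a string such as '1.8.1+cu111' into a tuple of ints, e.g. (1, 8, 1)."""
    release = version.split("+")[0]  # drop a local build suffix if present
    parts = []
    for piece in release.split("."):
        match = re.match(r"\d+", piece)
        if match is None:
            break  # stop at the first non-numeric component
        parts.append(int(match.group()))
    return tuple(parts)


def meets_minimum(version, minimum):
    """Return True if `version` is at least `minimum` (both dotted strings)."""
    return parse_version(version) >= parse_version(minimum)


if __name__ == "__main__":
    python_version = ".".join(str(n) for n in sys.version_info[:3])
    print("Python", python_version, "ok:", meets_minimum(python_version, "3.6"))

    # Report on PyTorch only if it can be imported, so the check never crashes.
    try:
        import torch
    except ImportError:
        print("PyTorch is not installed")
    else:
        print("PyTorch", torch.__version__, "ok:",
              meets_minimum(torch.__version__, "1.8.1"))
```

Running the script prints one line per dependency. A result of `ok: False` only means the version is older than the one the repo was tested on.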

Use OpenPrompt

Base Concepts

To use OpenPrompt, you instantiate a number of base classes and then run a training process with PyTorch.

Introduction by a Simple Example

With the modularity and flexibility of OpenPrompt, you can easily develop a prompt-learning pipeline.

Step 1: Define a task

The first step is to determine the current NLP task: think about what your input data looks like and what you want to infer from it. That is, determine the classes and the InputExample of the task. For simplicity, we use sentiment analysis as an example.

    from openprompt.data_utils import InputExample

    classes = [  # There are two classes in sentiment analysis, one for negative and one for positive
        "negative",
        "positive",
    ]
    dataset = [  # For simplicity, there are only two examples
        # text_a is the input text of the data; some other datasets may have multiple input sentences in one example.
        InputExample(
            guid=0,
            text_a="Albert Einstein was one of the greatest intellects of his time.",
        ),
        InputExample(
            guid=1,
            text_a="The film was badly made.",
        ),
    ]

Step 2: Define a Pre-trained Language Model (PLM)

Choose a PLM to support your task. Different models have different attributes. Here load_plm returns four values, which are assigned to plm, tokenizer, model_config, and WrapperClass. OpenPrompt is compatible with models on Hugging Face.

    from openprompt.plms import load_plm

    plm, tokenizer, model_config, WrapperClass = load_plm("bert", "bert-base-cased")

Step 3: Define a ManualTemplate Instance

The ManualTemplate class is a modifier of the original input text.

We have defined text_a in Step 1.
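As a rough illustration of what "modifying the input text" means, the pure-Python sketch below fills a template string that has a text_a placeholder and a mask slot. It is not OpenPrompt's implementation: the function `fill_template` and the regular expression are hypothetical, and `[MASK]` is used as the mask token because that is the mask token of BERT tokenizers.

```python
import re

# Matches the two slot forms used in this README's template string:
#   {"placeholder":"text_a"}  and  {"mask"}
SLOT = re.compile(r'\{"(placeholder|mask)"(?:\s*:\s*"(\w+)")?\}')


def fill_template(template, example, mask_token="[MASK]"):
    """Replace placeholder slots with fields of `example` and mask slots with `mask_token`."""
    def substitute(match):
        kind, field = match.group(1), match.group(2)
        if kind == "mask":
            return mask_token
        return example[field]  # e.g. example["text_a"]
    return SLOT.sub(substitute, template)


template = '{"placeholder":"text_a"} It was {"mask"}'
example = {"text_a": "The film was badly made."}
print(fill_template(template, example))
# The film was badly made. It was [MASK]
```

The PLM is then asked to predict the word at the mask position. In OpenPrompt itself, the template is created with ManualTemplate: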

    from openprompt.prompts import ManualTemplate

    promptTemplate = ManualTemplate(
        text='{"placeholder":"text_a"} It was {"mask"}',
        tokenizer=tokenizer,
    )

Step 4: Define a ManualVerbalizer Instance

The ManualVerbalizer class projects the original labels, which are defined in the initial classes list, to sets of label words. Every value in the classes list must appear as a key in the label words dictionary, and each key maps to one or more label words.
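To make the one-to-many mapping concrete, here is a minimal pure-Python sketch of how scores for label words could be turned into a class prediction. It assumes a simple average over each class's label words, and the aggregation OpenPrompt actually performs may differ. The functions `class_scores` and `predict` and the numeric scores are made up for illustration.

```python
label_words = {
    "negative": ["bad"],
    "positive": ["good", "wonderful", "great"],
}


def class_scores(word_scores, label_words):
    """Average the scores of each class's label words (one simple aggregation choice)."""
    return {
        label: sum(word_scores[word] for word in words) / len(words)
        for label, words in label_words.items()
    }


def predict(word_scores, label_words):
    """Return the class whose label words have the highest average score."""
    scores = class_scores(word_scores, label_words)
    return max(scores, key=scores.get)


# Made-up scores for candidate words at the mask position, for illustration only.
word_scores = {"bad": 1.0, "good": 4.0, "wonderful": 2.0, "great": 3.0}
print(class_scores(word_scores, label_words))
print(predict(word_scores, label_words))
```

Averaging keeps a class with many label words from winning simply because it has more words to sum over.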

    from openprompt.prompts import ManualVerbalizer

    promptVerbalizer = ManualVerbalizer(
        classes=classes,
        label_words={
            "negative": ["bad"],
            "positive": ["good", "wonderful", "great"],
        },
        tokenizer=tokenizer,
    )

Step 5: Define the PromptForClassification Instance

PromptForClassification takes three arguments: the PLM (plm), a ManualTemplate instance, and a ManualVerbalizer instance.

    from openprompt import PromptForClassification

    promptModel = PromptForClassification(
        template=promptTemplate,
        plm=plm,
        verbalizer=promptVerbalizer,
    )

Step 6: Define a PromptDataLoader Instance

The PromptDataLoader is essentially a prompt version of the PyTorch DataLoader that batches the dataset for training. Here it takes four arguments: the dataset, the tokenizer, the ManualTemplate instance, and the WrapperClass returned by load_plm (passed as tokenizer_wrapper_class).
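Conceptually, such a loader applies the template to every example and then groups the results into batches. The sketch below shows only that idea in plain Python. A real loader also tokenizes the text and produces tensors, which is omitted here. The names `iter_batches` and `toy_prompt_loader`, the lambda standing in for the template, and the third example sentence are all hypothetical.

```python
def iter_batches(items, batch_size):
    """Yield consecutive slices of `items`, each holding at most `batch_size` elements."""
    for start in range(0, len(items), batch_size):
        yield items[start:start + batch_size]


def toy_prompt_loader(texts, wrap, batch_size=2):
    """Apply `wrap` (a stand-in for the template) to every text, then batch the results."""
    wrapped = [wrap(text) for text in texts]
    return list(iter_batches(wrapped, batch_size))


texts = [
    "Albert Einstein was one of the greatest intellects of his time.",
    "The film was badly made.",
    "A third, hypothetical example.",
]
batches = toy_prompt_loader(texts, lambda t: t + " It was [MASK]", batch_size=2)
for batch in batches:
    print(batch)
```

With OpenPrompt, the corresponding loader is created as follows: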

    from openprompt import PromptDataLoader

    data_loader = PromptDataLoader(
        dataset=dataset,
        tokenizer=tokenizer,
        template=promptTemplate,
        tokenizer_wrapper_class=WrapperClass,
    )

Step 7: Train and inference

Done! We can conduct training and inference in the same way as in any other PyTorch workflow.

    import torch

    # Zero-shot inference using a pretrained MLM with a prompt
    promptModel.eval()
    with torch.no_grad():
        for batch in data_loader:
            logits = promptModel(batch)
            preds = torch.argmax(logits, dim=-1)
            # preds holds one class index per example in the batch
            print([classes[i] for i in preds.tolist()])
    # predictions would be 1, 0 for classes 'positive', 'negative'

Please refer to our tutorial scripts and documentation for more details.

Datasets

We provide a series of download scripts in the dataset/ folder. Feel free to use them to download benchmarks.

Performance Report

OpenPrompt makes a very large number of method combinations possible. We are trying our best to test the performance of different methods as soon as possible, and the results will be continually added to the performance tables. We also encourage users to find the best hyper-parameters for their own tasks and report the results by opening a pull request.

Known Issues

Major improvement/enhancement in future.

- We made some major changes from the last version, so part of the docs is outdated. We will fix it soon.

Citation

Please cite our paper if you use OpenPrompt in your work:

    @article{ding2021openprompt,
      title={OpenPrompt: An Open-source Framework for Prompt-learning},
      author={Ding, Ning and Hu, Shengding and Zhao, Weilin and Chen, Yulin and Liu, Zhiyuan and Zheng, Hai-Tao and Sun, Maosong},
      journal={arXiv preprint arXiv:2111.01998},
      year={2021}
    }

Contributors

We thank all the contributors to this project. More contributors are welcome!