- Download and install Ollama

- Pull down a model (or a few) from the library. Ex: `ollama pull llava`

- Run this in your terminal: `OLLAMA_ORIGINS=*.github.io ollama serve`

- Use your browser to go to LLM-X

- Start chatting!
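
The `OLLAMA_ORIGINS` step matters because LLM-X runs in the browser, so its requests to the local Ollama server are cross-origin and are rejected unless the page's origin is allowed. The following is a minimal sketch of a connectivity check, not LLM-X's actual code. It assumes Ollama is listening on its default address `http://localhost:11434`; the names `listModels` and `modelNames` are hypothetical.

```typescript
// Only the part of Ollama's GET /api/tags response that this sketch uses.
interface TagsResponse {
  models: { name: string }[]
}

// Assumption: Ollama is running on its default host and port.
const DEFAULT_OLLAMA_URL = 'http://localhost:11434'

// Pure helper: pull the model names out of a /api/tags response body.
function modelNames(body: TagsResponse): string[] {
  return body.models.map(model => model.name)
}

// Hypothetical helper: list the models that have been pulled locally.
// From a browser page, this request fails with a CORS error unless the
// page's origin is allowed through OLLAMA_ORIGINS.
async function listModels(baseUrl: string = DEFAULT_OLLAMA_URL): Promise<string[]> {
  const response = await fetch(`${baseUrl}/api/tags`)
  if (!response.ok) {
    throw new Error(`Ollama responded with HTTP ${response.status}`)
  }
  return modelNames((await response.json()) as TagsResponse)
}
```

If `listModels()` rejects in the browser console while `ollama serve` is running, the allowed origins are the first thing to check.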

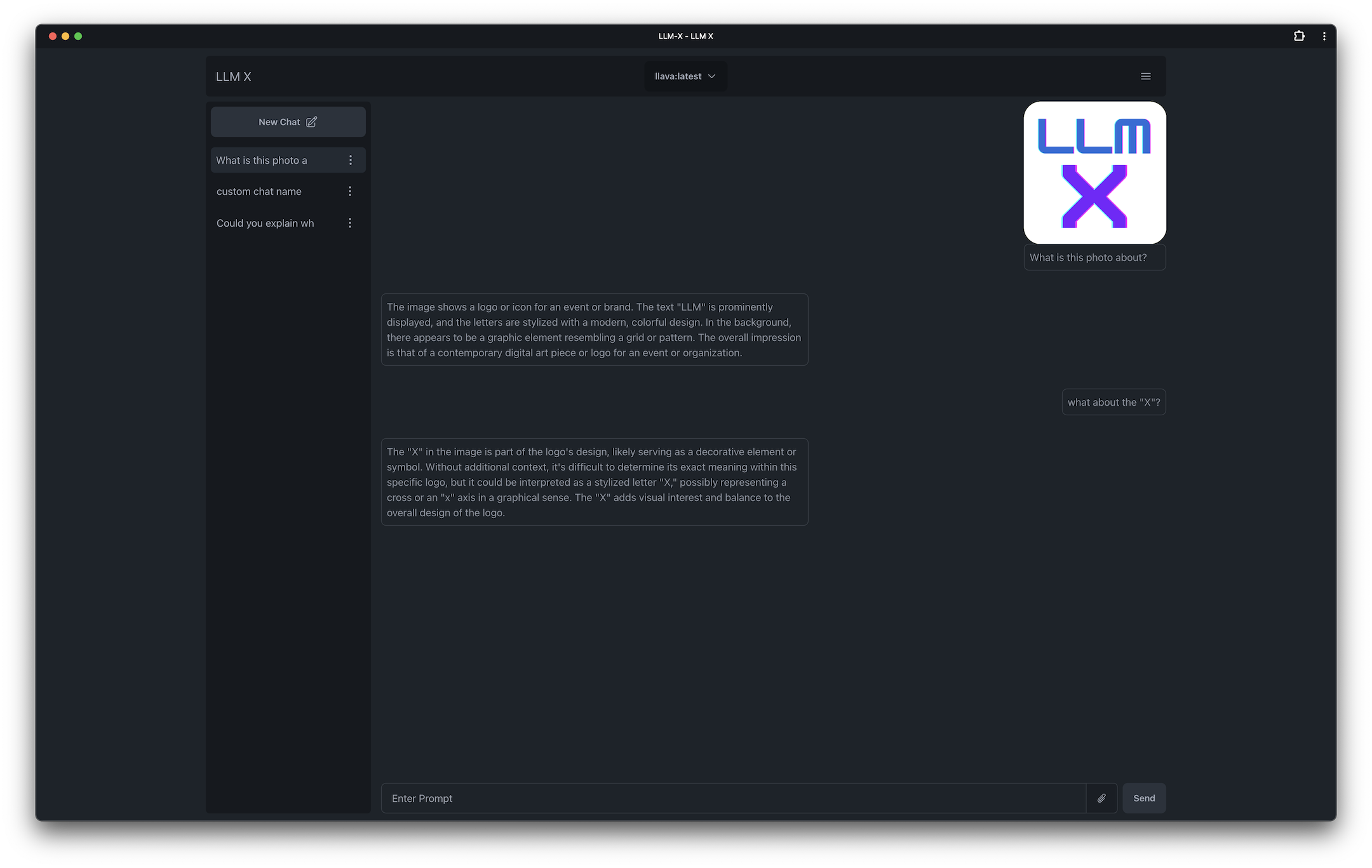

| Conversation about logo |

|---|

|

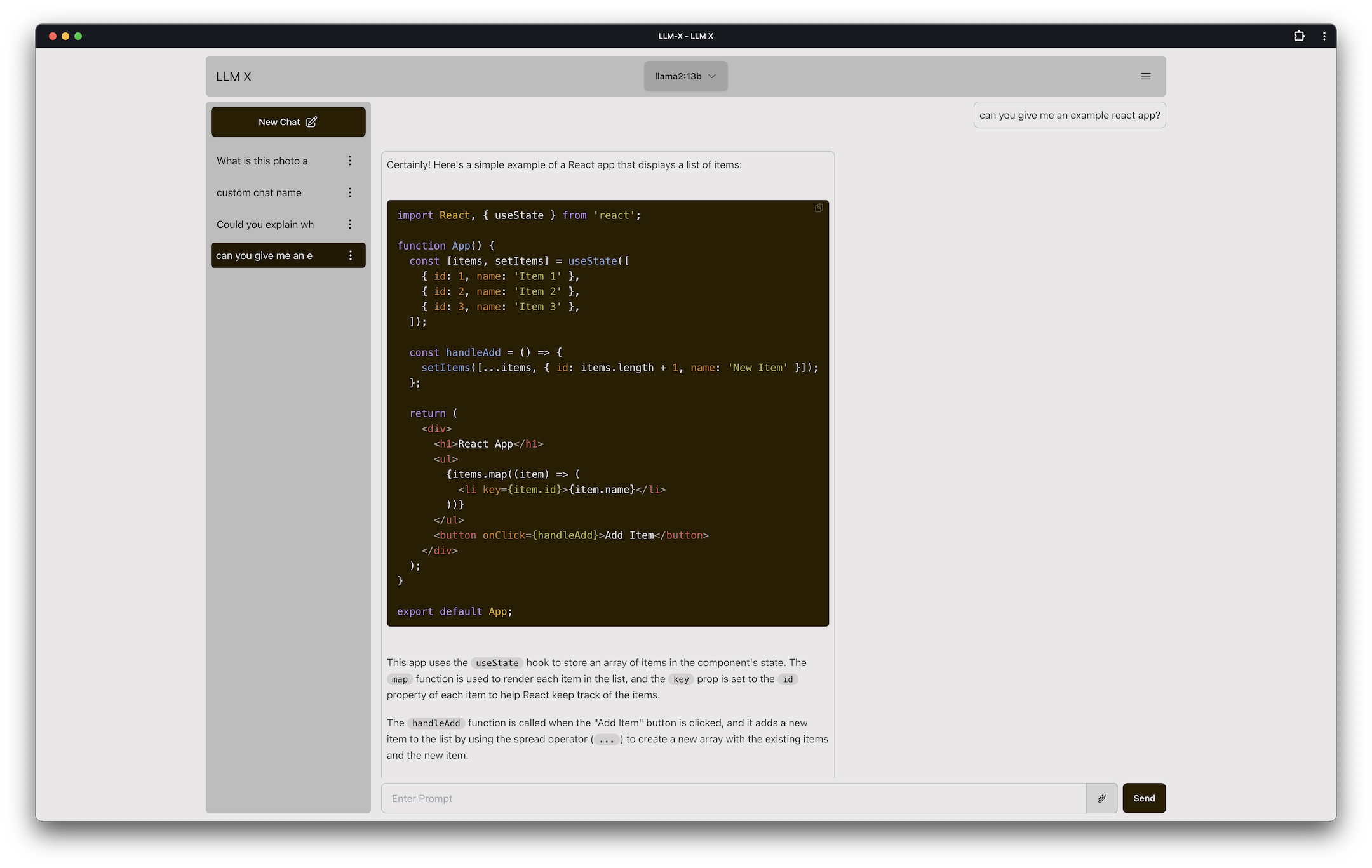

| Showing off code and light theme |

|---|

|

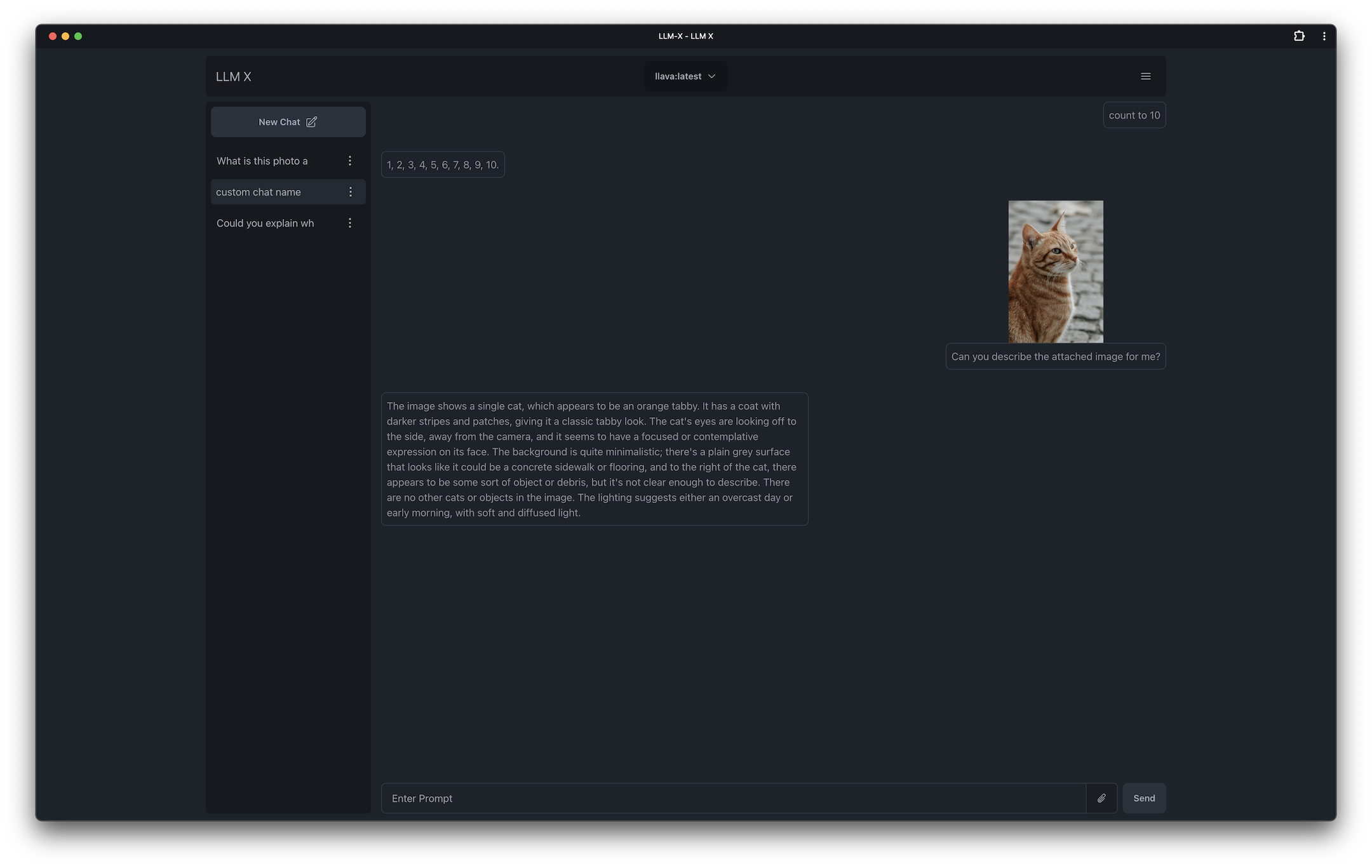

| Responding about a cat |

|---|

|

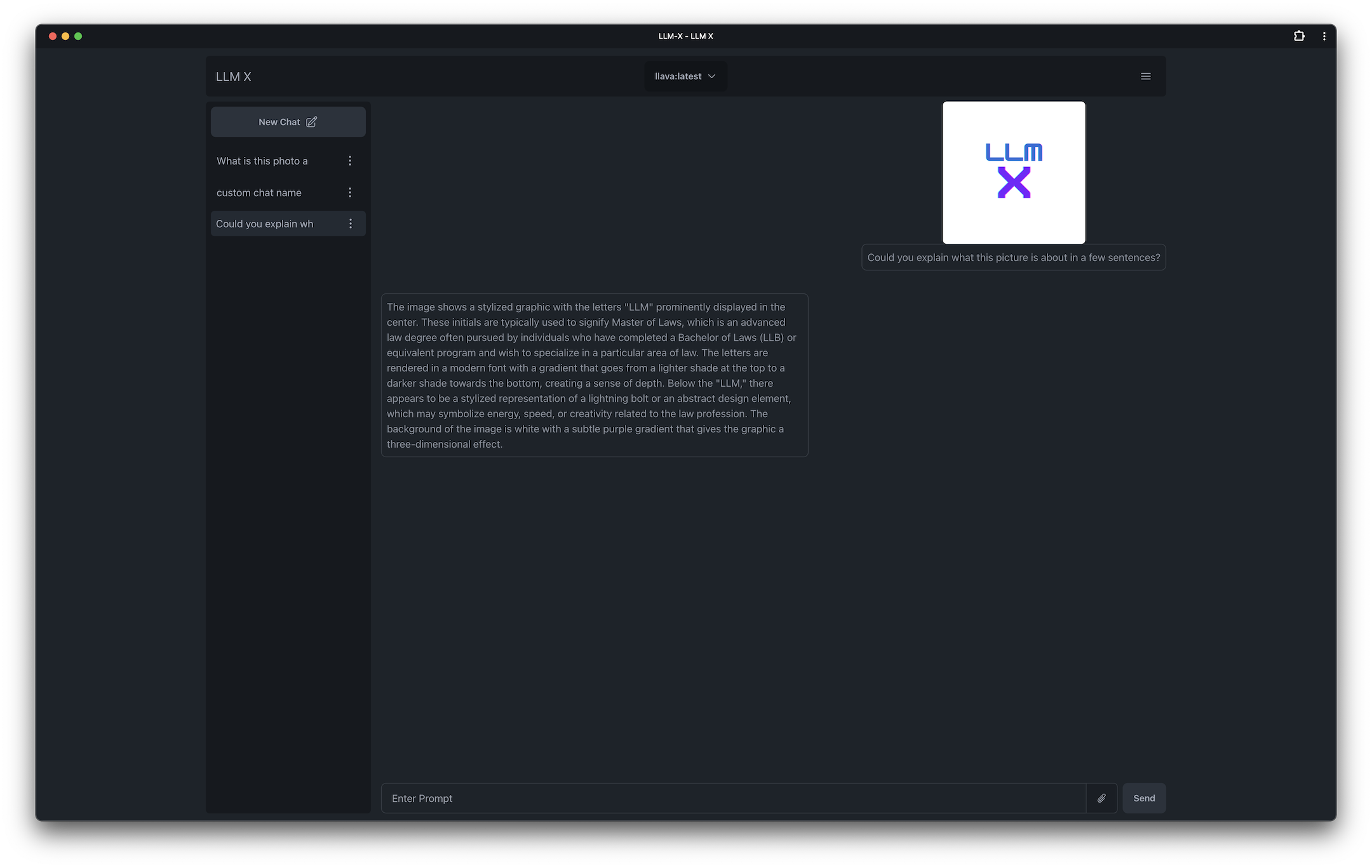

| Another logo response |

|---|

|

What is this? A ChatGPT-style UI for the niche group of folks who run Ollama locally (think of Ollama as an offline ChatGPT). Supports sending images and text! Works offline through PWA (Progressive Web App) standards (it's not dead!)

Why do this? I have been interested in LLM UI for a while now, and this seemed like a good intro application. I've been introduced to a lot of modern technologies thanks to this project as well. It's been fun!

Why so many buzz words? I couldn't help but be cool 😎

Logic helpers:

UI Helpers:

Project setup helpers:

Inspiration: ollama-ui's project, which allows users to connect to Ollama via a web app.

Perplexity.ai: Perplexity has made some amazing advancements in the LLM UI space, and I have been very interested in getting to that point. Hopefully this starter project lets me get closer to doing something similar!

Clone the project, and run `npm install` in the root directory

`npm run dev` starts a local instance and opens up a browser tab under https:// (for PWA reasons)

- Text Entry and Response to Ollama

- Conversation history

- Ability to manage multiple chats

- Code highlighting with Highlight.js

- Ability to copy responses from Ollama

- Image to text using Ollama's multimodal abilities

- Offline Support via PWA technology

- Add Screenshots because no one is going to read these

- Refresh LLM response button

- Re-write user message (triggering LLM refresh)

- Bot "Personas" allow users to override the bot's system message
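
Several of the features above (conversation history, image to text, and persona system messages) come down to how the request to Ollama is assembled. The following is a minimal sketch of building a body for Ollama's `/api/chat` endpoint. It is not LLM-X's actual implementation; `buildChatRequest`, `stripDataUrlPrefix`, and the `options` shape are hypothetical names chosen for illustration.

```typescript
interface ChatMessage {
  role: 'system' | 'user' | 'assistant'
  content: string
  images?: string[] // base64-encoded image data, without a "data:" URL prefix
}

interface ChatRequest {
  model: string
  messages: ChatMessage[]
  stream: boolean
}

// Browsers usually hand images over as data URLs ("data:image/png;base64,....").
// Ollama expects only the base64 payload, so drop everything up to the comma.
function stripDataUrlPrefix(dataUrl: string): string {
  const commaIndex = dataUrl.indexOf(',')
  if (dataUrl.startsWith('data:') && commaIndex !== -1) {
    return dataUrl.slice(commaIndex + 1)
  }
  return dataUrl
}

// Hypothetical helper: persona (system message) first, then prior history,
// then the new user message with any attached images.
function buildChatRequest(
  model: string,
  history: ChatMessage[],
  userText: string,
  options: { personaPrompt?: string; imageDataUrls?: string[] } = {},
): ChatRequest {
  const messages: ChatMessage[] = []
  if (options.personaPrompt) {
    messages.push({ role: 'system', content: options.personaPrompt })
  }
  messages.push(...history)

  const userMessage: ChatMessage = { role: 'user', content: userText }
  if (options.imageDataUrls && options.imageDataUrls.length > 0) {
    userMessage.images = options.imageDataUrls.map(stripDataUrlPrefix)
  }
  messages.push(userMessage)

  return { model, messages, stream: true }
}
```

Images only make sense with a multimodal model such as `llava`; a text-only model may ignore or reject them.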

- LangChain.js was attempted while spiking on this app, but unfortunately it was not set up correctly for stopping incoming streams. I hope this gets fixed in the future, or that a custom LLM agent can be used in order to use LangChain.

- Originally I used create-react-app 👴 while making this project, without knowing it is no longer maintained. I am now using Vite. 🤞 This already allows me to use libs like `ollama-js` that I could not use before. I will be testing more with LangChain very soon.

- This readme was written with https://stackedit.io/app

- Changes to the main branch trigger an immediate deploy to https://mrdjohnson.github.io/llm-x/
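
On the LangChain.js note about stopping incoming streams: with plain `fetch`, a stream can be cancelled through an `AbortController`. The following is a minimal sketch of that approach, not LLM-X's actual code. It assumes Ollama's default address `http://localhost:11434` and its newline-delimited JSON streaming format for `/api/chat`; `streamChat` and `splitLines` are hypothetical names.

```typescript
// Split buffered text into complete lines, keeping any trailing partial line
// so it can be completed by the next network chunk.
function splitLines(buffer: string): { lines: string[]; rest: string } {
  const parts = buffer.split('\n')
  const rest = parts.pop() ?? ''
  return { lines: parts.filter(line => line.trim() !== ''), rest }
}

// Hypothetical helper: stream a chat response and report each piece of text.
// Aborting the signal makes the pending fetch/read reject with an AbortError.
async function streamChat(
  body: unknown,
  onToken: (text: string) => void,
  signal: AbortSignal,
  baseUrl: string = 'http://localhost:11434', // assumption: default Ollama address
): Promise<void> {
  const response = await fetch(`${baseUrl}/api/chat`, {
    method: 'POST',
    headers: { 'Content-Type': 'application/json' },
    body: JSON.stringify(body),
    signal,
  })
  if (!response.ok || !response.body) {
    throw new Error(`Ollama responded with HTTP ${response.status}`)
  }

  const reader = response.body.getReader()
  const decoder = new TextDecoder()
  let buffer = ''
  while (true) {
    const { done, value } = await reader.read()
    if (done) break
    buffer += decoder.decode(value, { stream: true })
    const { lines, rest } = splitLines(buffer)
    buffer = rest
    for (const line of lines) {
      // Each streamed line is a JSON object carrying a partial assistant message.
      const chunk = JSON.parse(line) as { message?: { content?: string } }
      if (chunk.message?.content) onToken(chunk.message.content)
    }
  }
}
```

A stop button would then keep a reference to the controller: create `const controller = new AbortController()`, pass `controller.signal` to `streamChat`, and call `controller.abort()` when the user clicks stop, catching the resulting rejection.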