I assume you ran PyTorch and Turbo in the same process. Their multithreading mechanisms may conflict with each other. Could you please try again using the scripts in ./benchmark?
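One way to keep the thread pools from competing is to fix the thread budget once, before any numerical library is imported. The sketch below assumes the OpenMP and MKL runtimes honor the standard `OMP_NUM_THREADS` and `MKL_NUM_THREADS` environment variables when they initialize. `pin_threads` is a hypothetical helper, not part of Turbo or PyTorch.

```python
import os

def pin_threads(num_threads: int) -> dict:
    """Set common thread-count env vars (hypothetical helper).

    OMP_NUM_THREADS and MKL_NUM_THREADS are read by the OpenMP and MKL
    runtimes when they initialize, so call this before importing
    torch or turbo_transformers; later changes typically have no effect.
    """
    if num_threads < 1:
        raise ValueError("num_threads must be >= 1")
    value = str(num_threads)
    names = ("OMP_NUM_THREADS", "MKL_NUM_THREADS")
    for name in names:
        os.environ[name] = value
    return {name: os.environ[name] for name in names}

if __name__ == "__main__":
    print(pin_threads(4))
    # Only now import the heavy libraries, e.g.:
    # import torch, turbo_transformers
```

Running each framework in its own process with the same settings keeps the comparison fair. This is what separate benchmark scripts give you.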

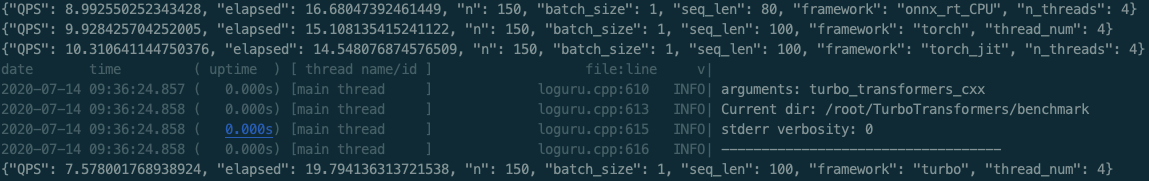

Thanks for your reply! Here is a screenshot of running benchmark.sh. Turbo still has the lowest QPS. The CPU is an Intel(R) Xeon(R) CPU E5-2620 v4 @ 2.10GHz.
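A QPS figure like the ones in the screenshot usually comes down to completed requests divided by wall-clock time. The sketch below is a library-agnostic version of that idea. It assumes one call equals one request, calls run sequentially, and a few warm-up calls are excluded. `measure_qps` and `fake_infer` are hypothetical names, not part of the ./benchmark scripts.

```python
import time
from typing import Callable

def measure_qps(infer: Callable[[], object], n_iters: int = 100, n_warmup: int = 5) -> float:
    """Return queries per second for `infer`, excluding warm-up calls."""
    if n_iters < 1:
        raise ValueError("n_iters must be >= 1")
    for _ in range(n_warmup):
        infer()  # warm-up: thread pools, caches, lazy initialization
    start = time.perf_counter()
    for _ in range(n_iters):
        infer()
    elapsed = time.perf_counter() - start
    # Guard against a zero reading on very coarse clocks.
    return n_iters / elapsed if elapsed > 0 else float("inf")

if __name__ == "__main__":
    def fake_infer():
        # Stand-in workload; replace with a real model forward pass.
        sum(i * i for i in range(10_000))
    print(f"QPS: {measure_qps(fake_infer, n_iters=50):.1f}")
```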

Interesting. Maybe your CPU is just not a good fit for Turbo.

@feifeibear I switched to another machine with an Intel(R) Core(TM) i7-7700K CPU @ 4.20GHz and still got similar results.

Could you check whether my container start command is wrong? Thanks!

I do not think so. Could you please profile BERT to get more details?

https://github.com/Tencent/TurboTransformers/blob/master/docs/profiler.md

Here is the profiling code:

```python
import transformers
import torch
import turbo_transformers

# Limit Turbo to 4 threads, matching the benchmark setting.
num_threads = 4
turbo_transformers.set_num_threads(num_threads)

# Load the PyTorch BERT model and switch it to inference mode.
model_id = "bert-base-uncased"
model = transformers.BertModel.from_pretrained(model_id)
model.eval()

# A toy batch: 2 sequences of length 4.
input_ids = torch.tensor(
    ([12166, 10699, 16752, 4454], [5342, 16471, 817, 16022]),
    dtype=torch.long)
position_ids = torch.tensor(([1, 0, 0, 0], [1, 1, 1, 0]), dtype=torch.long)
segment_ids = torch.tensor(([1, 1, 1, 0], [1, 0, 0, 0]), dtype=torch.long)

# Convert the PyTorch model into a Turbo model.
tt_model = turbo_transformers.BertModel.from_torch(model)

# Profile one forward pass with Turbo's built-in profiler (see docs/profiler.md).
with turbo_transformers.pref_guard("info") as perf:
    res = tt_model(input_ids, position_ids=position_ids, token_type_ids=segment_ids)
```

Aha, I found the problem: batch_gemm3 should not take that much time.

See my profiling results.

If you are using MKL as the BLAS provider, Turbo uses cblas_sgemm_batch to perform batch_gemm. You can see it here:

https://github.com/Tencent/TurboTransformers/blob/master/turbo_transformers/layers/kernels/mat_mul.cpp#L181
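A batched GEMM such as cblas_sgemm_batch runs many independent matrix products in a single call. With beta = 0, it computes C[i] = alpha * A[i] @ B[i] for every batch index i. In self-attention this is the shape of the per-head products such as Q·Kᵀ. How Turbo maps batch and heads onto the batch index is my assumption, not something confirmed here. Below is a naive pure-Python reference for checking semantics only, not speed. `batch_gemm_ref` is a hypothetical name, and its signature is not MKL's.

```python
from typing import List

Matrix = List[List[float]]

def batch_gemm_ref(a: List[Matrix], b: List[Matrix], alpha: float = 1.0) -> List[Matrix]:
    """Naive batched GEMM (beta = 0): out[i] = alpha * a[i] @ b[i]."""
    if len(a) != len(b):
        raise ValueError("batch sizes differ")
    out: List[Matrix] = []
    for a_i, b_i in zip(a, b):
        m, k = len(a_i), len(a_i[0])
        if len(b_i) != k:
            raise ValueError("inner dimensions differ")
        n = len(b_i[0])
        # One ordinary GEMM per batch entry; a BLAS batch call fuses these.
        c_i = [[alpha * sum(a_i[r][t] * b_i[t][col] for t in range(k))
                for col in range(n)]
               for r in range(m)]
        out.append(c_i)
    return out

if __name__ == "__main__":
    a = [[[1, 2], [3, 4]], [[1, 0], [0, 1]]]
    b = [[[5, 6], [7, 8]], [[2, 3], [4, 5]]]
    print(batch_gemm_ref(a, b))
```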

In my humble opinion, the cause may be that the MKL shipped with PyTorch is not well suited to your CPU.

You can follow these steps to debug:

- Modify CMakeLists.txt to use OpenBLAS as the BLAS provider (you may need to install gfortran in your container).

- Run the profiling script again and see whether batch_gemm3 returns to normal.

- If that works, reinstall MKL using conda (search for the recommended command), because OpenBLAS is not the fastest BLAS on Intel CPUs.

I just ran the profiling script again, and batch_gemm3 returned to normal without any changes.

OK, I will try OpenBLAS later.

What happened? Are you sharing the CPU with other workloads? I believe Turbo's performance is quite stable.

I ran the benchmark script again and got the same result: Turbo is slower than PyTorch. This time, I was the only one using the 4 CPU cores.

Also, onnx_rt_cpu becomes slower than PyTorch as seq_len grows. Maybe my CPU is just not suited to accelerated model inference.

According to your screenshots, Turbo has already done a good job. Most of the time is spent in GEMM, which is MKL's responsibility.

You can set MKL_VERBOSE=1 on the command line and compare the per-routine times between PyTorch and Turbo.
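The sketch below compares two MKL_VERBOSE logs by summing the logged time per routine, so the PyTorch and Turbo runs can be put side by side. It assumes each call is logged on one line of the form `MKL_VERBOSE <ROUTINE>(<args>) <time><unit> ...`, for example `SGEMM(...) 12.5us`. The exact layout can vary between MKL versions, so check the regex against your own output. The function names and the log file names in the usage comment are hypothetical.

```python
import re
from collections import defaultdict
from typing import Dict, Iterable

# Assumed line shape: "MKL_VERBOSE SGEMM(N,N,...) 12.34us CNR:OFF ..."
_LINE = re.compile(r"^MKL_VERBOSE\s+(\w+)\(.*?\)\s+([\d.]+)(ns|us|ms|s)\b")
_TO_US = {"ns": 1e-3, "us": 1.0, "ms": 1e3, "s": 1e6}

def routine_times_us(lines: Iterable[str]) -> Dict[str, float]:
    """Sum the logged time of each MKL routine, in microseconds."""
    totals: Dict[str, float] = defaultdict(float)
    for line in lines:
        m = _LINE.match(line.strip())
        if m:  # lines that do not match the assumed shape are skipped
            name, value, unit = m.groups()
            totals[name] += float(value) * _TO_US[unit]
    return dict(totals)

def compare(torch_lines: Iterable[str], turbo_lines: Iterable[str]) -> None:
    """Print per-routine totals for both runs, largest difference first."""
    a, b = routine_times_us(torch_lines), routine_times_us(turbo_lines)
    rows = sorted(set(a) | set(b),
                  key=lambda r: abs(b.get(r, 0.0) - a.get(r, 0.0)),
                  reverse=True)
    print(f"{'routine':<16}{'torch_us':>12}{'turbo_us':>12}")
    for r in rows:
        print(f"{r:<16}{a.get(r, 0.0):>12.1f}{b.get(r, 0.0):>12.1f}")

# Usage (hypothetical script and file names):
#   MKL_VERBOSE=1 python run_torch.py > torch.log
#   MKL_VERBOSE=1 python run_turbo.py > turbo.log
# with open("torch.log") as f1, open("turbo.log") as f2:
#     compare(f1, f2)
```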

TODO: The CPU version of Turbo uses the same MKL conda packages as PyTorch. We should check (1) whether specific MKL versions make Turbo slow, and (2) whether we set environment variables differently in a way that hurts MKL performance.
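For check (2), one approach is to snapshot the threading- and MKL-related environment variables in each setup, then diff the two snapshots. The prefix list (`MKL_`, `OMP_`, `KMP_`) is my assumption about which variables matter. `threading_env` and `env_diff` are hypothetical helper names.

```python
import os
from typing import Dict, Mapping, Optional, Tuple

PREFIXES = ("MKL_", "OMP_", "KMP_")  # assumed set of relevant prefixes

def threading_env(env: Optional[Mapping[str, str]] = None) -> Dict[str, str]:
    """Return the env vars whose names start with one of PREFIXES."""
    env = os.environ if env is None else env
    return {k: v for k, v in sorted(env.items()) if k.startswith(PREFIXES)}

def env_diff(a: Mapping[str, str],
             b: Mapping[str, str]) -> Dict[str, Tuple[Optional[str], Optional[str]]]:
    """Map each variable that differs to its (a, b) values; None means unset."""
    return {k: (a.get(k), b.get(k))
            for k in sorted(set(a) | set(b))
            if a.get(k) != b.get(k)}

if __name__ == "__main__":
    print(threading_env())
```

Run `threading_env()` in both the PyTorch and the Turbo environment (for example, inside each container), save the results, and pass them to `env_diff`.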

Hi @stevewyl, I rebuilt the Docker image and pushed it to Docker Hub:

```shell
docker pull thufeifeibear/turbo_transformers_cpu:latest
```

I found that the MKL inside the original Docker image can be extremely slow on some CPUs. I reinstalled it, and performance looks better on my own CPU.
